Weekly Top Picks #117

OpenAI's main quest / Anthropic Trumps the DoD / The worst sales pitch ever / ARC-AGI 3, Spud, and Mythos / The tragedy of AI writing / This is a disaster, so have fun

Hey there, I’m Alberto! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles.

I’ve removed the Trust & Safety section. These reviews run up to 4,000 words, and I don’t think anyone has time to read that much, so this will bring the word count down (this one is only around 2,800!).

THE WEEK IN AI AT A GLANCE

Money & Business: OpenAI admits Anthropic ate its lunch and pivots the whole company to catch up.

Geopolitics: A federal judge tells the Pentagon it can't punish an AI company (Anthropic) for talking to the press.

Work & Workers: The Atlantic's March cover asks what happens when nobody's in charge of the biggest labor disruption in history.

Products & Capabilities: New models are coming right as a new benchmark (ARC-AGI 3) proved the old ones aren't as smart as we thought.

Culture & Society: AI can't write like Shakespeare, but most people aren't reading Shakespeare anyway, so why bother?

Philosophy: AI is an epistemic disaster, so we may as well have some fun.

THE WEEK IN THE ALGORITHMIC BRIDGE

(FREE) How to Survive the AI Age: A Concrete Guide: a holistic view of problems and solutions about AI that are 1) concrete and 2) unique.

(PAID) OpenAI’s Dead: Self-descriptive.

(FREE) The One Thing I Use AI For That Actually Makes Me Smarter: A Socratic dialogue about the value of debating your ideas with AI.

(FREE) It’s AI, so I Didn’t Read: The new anti-AI movement and why it won’t work despite the good intentions.

MONEY & BUSINESS

OpenAI is done chasing side quests

Fidji Simo, OpenAI’s CEO of applications, told staff last week that the company’s leadership is in the process of choosing what to cut, per the Wall Street Journal.

Over the past year, OpenAI shipped a browser (Atlas), e-commerce features inside ChatGPT, and Sora as a standalone social video app, and it began working on a hardware device. All of that is now being pulled back so the company can concentrate on coding and enterprise, which is, you guessed it, Anthropic’s territory. Simo’s framing: “We cannot miss this moment because we are distracted by side quests. We really have to nail productivity in general and particularly productivity on the business front.”

I wrote in November that OpenAI's over-diversification was a strategic mistake (“OpenAI is trying too many things at once”) and that sometimes a one-trick pony beats a jack of all trades. This announcement is the closest thing to an admission we’ll get. I emphasized this point last week in an article appropriately titled “OpenAI’s Dead.”

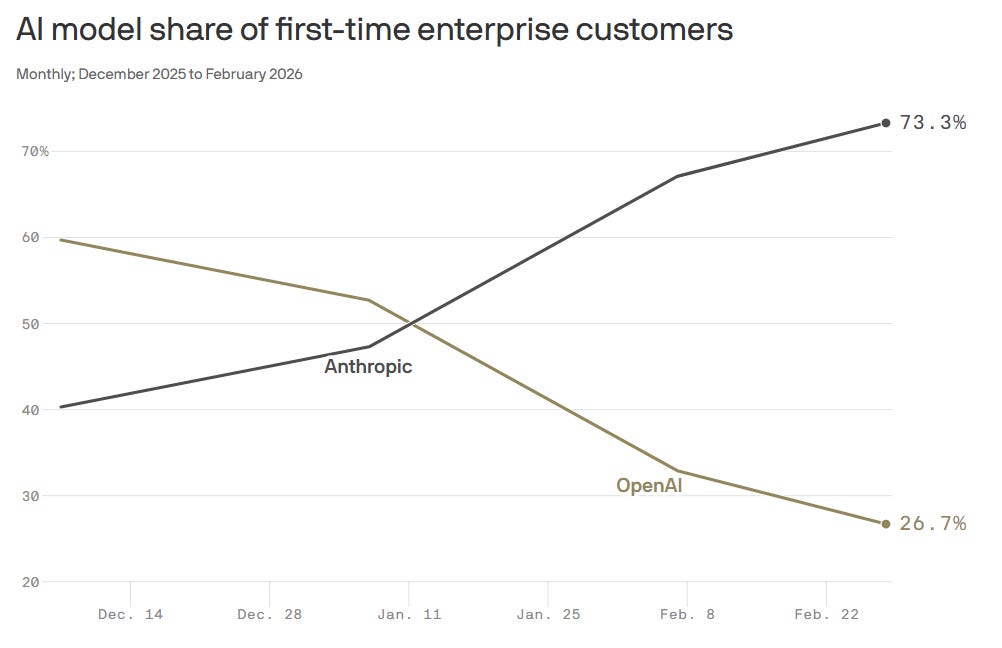

Anthropic, which the WSJ calls “the dominant AI provider for businesses” (it is indeed), built that lead largely on Claude Code and Cowork (and on being loyal to Americans). Simo told staff Anthropic’s success should serve as a “wake-up call” (she means a “code red”).

Meanwhile, Sora usage went nowhere after an early boost (it is now being fully discontinued, both as a product and as a model). None of the side quests (browser, TikTok clone, image generation, device, custom chips) generated enough traction or leverage to justify the scattered compute and engineering attention they demanded. Why are they playing with short-form video feeds when AGI is at the door, right?

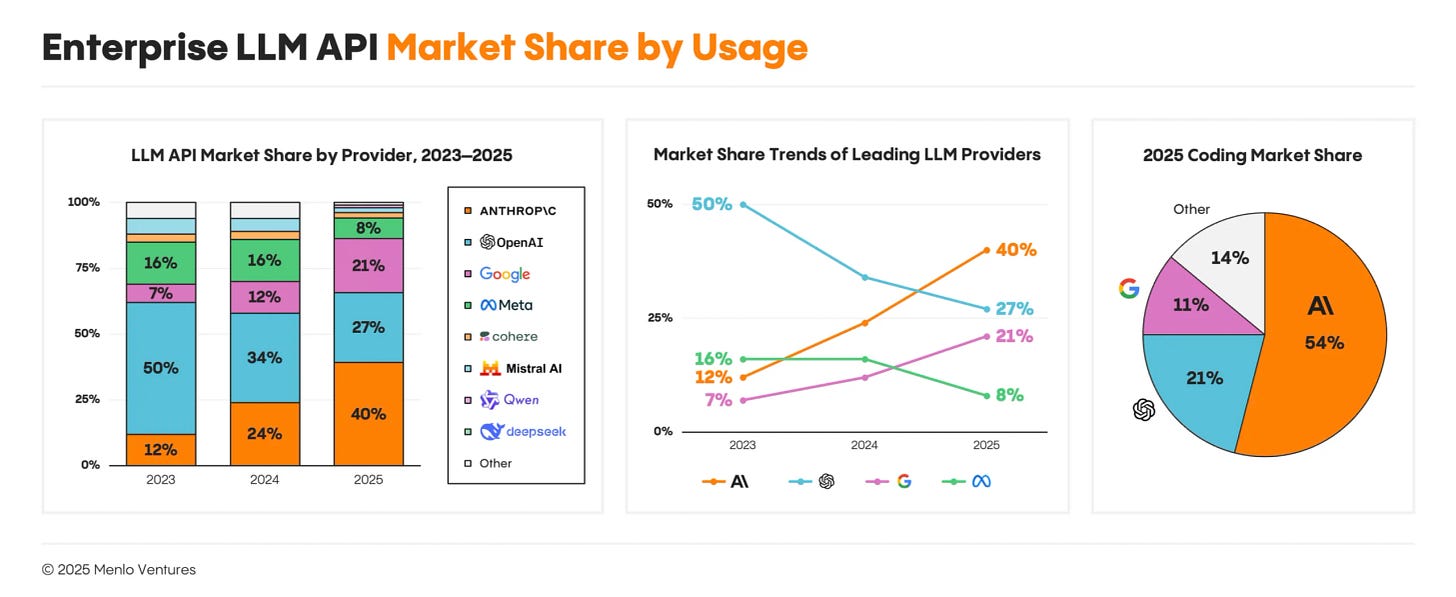

What makes this pivot especially urgent is the revenue picture. OpenAI has 900 million weekly active users and a strong consumer brand. Anthropic has a fraction of that visibility. And yet Anthropic has roughly the same revenue and leads OpenAI in enterprise market share, 40% to 27%, according to Menlo Ventures. Coding is the killer app, and developers prefer Claude. OpenAI regained some ground with a new Codex release and GPT-5.4 earlier this month (Simo says Codex now has more than two million weekly active users, up nearly four times since January), and intends to further reduce the gap with “Spud,” but that’s catch-up.

Is OpenAI the Yahoo of Anthropic’s Google?

“We are very much acting as if it’s a code red. I don’t think necessarily declaring codes for everything makes a ton of sense.” (There it is!)

Separately, Reuters reported that OpenAI is in advanced talks with private equity firms, including TPG, Advent, Bain, and Brookfield, to form a joint venture valued at roughly $10 billion before the new investment. The PE firms would distribute OpenAI’s enterprise products across their portfolio companies. That’s a play to accelerate corporate adoption through existing relationships, but it also signals that organic enterprise growth has not been working so far.

Does this focus on the enterprise by both OpenAI and Anthropic mean they are leaving consumer AI to Google (and, to some degree, Meta)? Is this how a near-AGI company behaves, by turning the entire company 180 degrees? Is this related to the stagnation of Stargate? Or to Microsoft’s threats over Amazon’s deal?

I know Sam Altman has always had a stressful job as OpenAI CEO, but right now, more than ever before, I would rather not be in his shoes.

Sources: WSJ, Axios, Reuters, Fidji Simo

GEOPOLITICS

Anthropic Trumps the DoD

The Wall Street Journal reported that a US federal judge “halted the Trump administration’s designation of Anthropic as a supply-chain risk,” ruling that the move was First Amendment retaliation, not national security policy. Judge Rita Lin pointed to internal Defense Department records showing the designation happened because of Anthropic's “hostile manner through the press.” If the Pentagon didn't like Anthropic's contract terms, she argued, it could simply stop using Claude.

(Backstory: Anthropic wanted assurances its models wouldn't be used in fully autonomous weapons or domestic surveillance. The Pentagon said existing law already covered that and pushed for unrestricted use for all lawful purposes. When negotiations broke down, Defense Secretary Hegseth designated the company a supply-chain risk, and Trump directed all federal agencies to stop working with Anthropic. Hegseth also ordered contractors to drop the company, a move whose legal authority the judge found dubious enough to block explicitly.)

Two things worth noting.

First, this injunction required the judge to find Anthropic likely to succeed on the merits. That’s not a guarantee, but it is still a substantive assessment that the constitutional argument holds. The evidentiary record (internal DoD documents linking the designation to press criticism) is the kind of evidence that's very hard to explain away at trial. (You can read a more informed take from Dean Ball here or Samuel Roland here.)

Second, Trump doesn’t like the people, the judges, or whoever else telling him what he can or can’t do, but the precedent is clear: “Trump always chickens out,” whether it’s tariffs, TikTok, the Iran war, or Anthropic’s supply-chain risk designation. A chicken is a chicken outward but also inward. (This Trumpian behavior is so well known that markets move according to this heuristic; probably not the legacy Trump wanted to leave behind.)

My bet is this will end as another item on the list of anecdotes that, years from now, will remind us of how lamentable and farcical this administration was.

Sources: The Wall Street Journal

WORK & WORKERS

The Atlantic on AI and jobs

Josh Tyrangiel’s Atlantic cover story (from this past February) starts in 1869, when Massachusetts decided to start counting what the Industrial Revolution was doing to workers. That counting impulse eventually became the Bureau of Labor Statistics. The BLS is now underfunded, understaffed, and has just lost its commissioner. Meaning, America built institutions to measure disruption, then let them rot right before the greatest disruption in history—allegedly—hit us with all its might.

The economists Tyrangiel interviews mostly agree on one thing: it’s too early to see AI in the employment data (which is not the same as saying that it’s too early to see AI in the actual workforce). They disagree about what that means, though.

Austan Goolsbee and Daron Acemoglu think it means slow adoption, historical precedent, the usual friction of legacy systems and human stubbornness. Anton Korinek thinks they’re reading the rearview mirror as the road turns; they are reading the data well, but not so much the nature of this technology: “Machines have always been dumb, and that’s why we don’t trust them and it’s always taken time to roll them out. But if they’re smarter than us, in many ways they can roll themselves out.”

The CEOs went quiet after a brief season of public candor about layoffs. Tyrangiel couldn’t get interviews with executives from Walmart, Amazon, Ford, Anthropic, Stripe, or Waymo. Even the Business Roundtable—an organization that exists to speak on these issues—had nothing to say. The big question that Tyrangiel doesn’t quite answer in his feature is at the core of this incoherence between spreadsheet numbers and real life: Are we approaching “the kind of disruption that can be managed with statistics—or the kind that creates statistics no one can bear to count.”

Meanwhile, in the statistics department…

A working paper by a different bureau, the National Bureau of Economic Research, surveyed 750 CFOs: only 44% plan AI-related cuts this year, totaling about 0.4% of U.S. employment (roughly 502,000 jobs). That is a ninefold jump from last year’s 55,000, but nowhere near the apocalyptic predictions.

The authors also documented what they call a productivity paradox, invoking Solow’s 1987 line about computers: executives feel more productive, but the revenue data doesn’t show it yet. The gap between what CEOs say on stage and what CFOs report in surveys is wide enough to drive an entire policy debate through. When will we start to say: “Well, this AI thing is not quite working as intended!”

Some economists, like Goolsbee, say it will take “years” for AI to register in productivity statistics or the labor market, which is fine. Others, like Korinek, say it will happen “as soon as this year.” Others, like Autor, say that, whatever it takes, the slower it happens, the better for workers.

There’s time before the macro and micro stories match, but for now, one thing is clear, which Tyrangiel, PR hat on, nails with this observation: “AI is unpopular. CEOs who talk about job cuts are even less popular. So maybe shut up about AI and jobs?”

As Noah Smith says, AI has the worst sales pitch ever.

Sources: The Atlantic, NBER, Noahpinion

PRODUCTS & CAPABILITIES

New models vs new benchmarks

This is probably the most exciting section this week.
