How to Survive the AI Age: A Concrete Guide

Let’s fix this annoying anxiety once and for all

Hey there, I’m Alberto! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles and experimental essays.

This is perhaps the most important guide I’ve written so far. For that reason, I’m keeping it 100% accessible. It’s a compressed listicle version of all the timely advice I’ve given you in the past months. Please pay it forward by resharing it with as many people as you can.

You guys received my last article with a spike of serious anxiety. I apologize. I understand.

For those of you who didn’t read it, let me summarize the thesis (borrowed from David Oks): new technologies that change the world, as AI presumably will, don’t do it by automating the tasks you do now but by making them unnecessary; the future of your job is not AI writing your emails for you but those emails not needing to be sent in the first place.

This is hardly specific to AI; it’s how tech paradigm shifts work. ATMs barely displaced branch bankers, but iPhones, apps, and digital banking killed the entire career. Automation didn’t decimate traditional banking; sheer pointlessness did.

AI putting you out of a job by automating your tasks is understandable (in the sense that it’s something you can “see coming” and “defend against”), but if the ground itself shifts so violently that your career falls through the cracks, well, that is harder to visualize and to fend off. And yet, when you look back at history, you realize most jobs did indeed fall through the cracks of time, to be forgotten.

Then I added a cherry on top: AI is that kind of shift, but unlike other tech, it is also the thing that builds the next paradigm. So, bottom line: AI is making irrelevant 1) our tasks and 2) us, too.

All in all, your anxiety is both reasonable and relatable. I share it with you. I also don’t know what’s coming. When you don’t see a threat coming (e.g., COVID), you just accept it after the fact, but anticipation is the mother of anxiety. We suffer from constant anticipation with AI, and the industry leaders aren’t helping. The usual advice for coping with this (not only for jobs but for “all things AI”) is bad.

It’s worse than bad—it’s false security in the form of abstract ideas that only make sense for those who already know them. Typical examples are: prepare for the unknown, be adaptive, develop equanimity, learn to learn, and, of course, get ahead in AI. Or its more anxiety-inducing cousin: don’t fall behind.

This is ~ok in the sense that it’s not wrong advice, but “be adaptive” means something only to someone who’s already adaptive and thus doesn’t really need to hear it. Same thing for the others; they presuppose the inner architecture and mindset they prescribe. The words are technically correct and functionally empty. You don’t tell someone who’s drowning to “just swim.” Unfortunately, that’s what you will get from AI leaders: “Oh, sure, we’re making your job—your career—extinct, so, you know, try to be flexible.”

Anyway, this guide is my attempt to fix this situation insofar as it can be fixed (I hate to say this, but AI is coming whether you like it or not, and that’s beyond fixing). I’ve been working on a guide like this for a while (most of it is a compression of things I’ve been writing about lately), but it was my last article that made me realize that, on the one hand, people really, really need this and, on the other hand, I don’t have a solid answer either. So this is not me performing as a guru (there are no gurus for the unknown) but me thinking through my own anxieties out loud, in case it helps you.

My goal is to give us both a holistic view of problems and solutions that are 1) concrete and thus actionable and 2) unique in the sense that they don’t sound like they could apply to anything in life, as does the usual advice.

The list has 8 items, and it’s solely about work and jobs because that’s the biggest worry for most people (who has time to worry about disinformation when there’s no food on their plate, right?). I think it’s possible to make similar lists for other themes (e.g., info diet, cognitive autonomy, life and relationships, education, etc.), but I’ll leave them for future posts if you like this format. I hope reading this helps you as much as writing it helped me.

I. Talk to someone who lived through an industry collapse

The best way to face the unknown is to make it known. People say “this time is different” about AI, and they’re right in some ways, but the emotional experience of watching your career become uncertain is ancient. This insight is the inspiration for this guide and thus deserves to go first. Ask an old uncle who worked in print when people used to read books, or a nephew who got a job as a developer three years ago and was let go last month… whoever it is, there are people who went through a version of what a lot of people, maybe you, are about to go through.

The specifics of the story are unimportant (historical disruptions all have idiosyncratic, contingent, and haphazard features), but the arc is constant: the “shape of the thing.” Let me draw a parallel with books.

There’s a denial phase (AI won’t take my job, I’m just better / this paper thingy is brittle, we’ll be back to writing on dead sheep skin), a bargaining phase (AI might take parts of my job, but the parts that make me feel most human are, of course, safe / handwritten books are more artistic and, honestly, why would you want a personal library?), and so on through the stages of grief that end with a period of indecision, provisionality, uncertainty (I will become a plumber, actually / So Gutenberg won and now everyone will be able to read: what a tragedy).

That’s where we are right now. The disruption feels life-threatening, but think how many disruptions this planet and its inhabitants have endured so far! You can read about these stories in books (ha, the irony), but it lands differently when a person you trust tells you. (Some disruptions are barely survivable, like the Black Death or the year 536 AD, but I don’t think AI is one of those. Some people do, but that’s another topic.)

The goal is not to have a recipe for how to survive AI (there is no such recipe, I’m afraid) but the reassurance that transitions (the liminal periods between two technological paradigms) are survivable. As things unfold, you’ll come back to that story and appreciate the similarities. History doesn’t repeat, but it rhymes, so make sure you know which rhyme you’re on. Unprecedented needn’t be the same as unintelligible.

The best preparation for an unprecedented crisis is hearing how someone survived a different one.

II. Don’t obsess about “AI skills”

A surprising thing to say for someone who writes about how to learn AI, right? Especially coming from someone who recently wrote that you need to be able to back up the “AI skills” on your resume or in an interview. Therein lies the difference: you must have AI skills, but you must not let AI skills have you; you shall dominate AI, but don’t let AI dominate you.

I’ve been playing with AI models and tools for almost a decade. Is it fair to expect everyone to have the same level of savviness I have? No. “Learn AI skills” is like someone in 1995 saying “learn internet skills.” It’s technically correct, but it means nothing. Where do you even start? Most people who try end up either taking some introductory course that teaches them what an LLM is, which is like learning how an engine works when what you needed was to learn to drive, or, conversely, picking up the first “100 best prompts” tutorial, which is like being handed a manual of traffic signs.

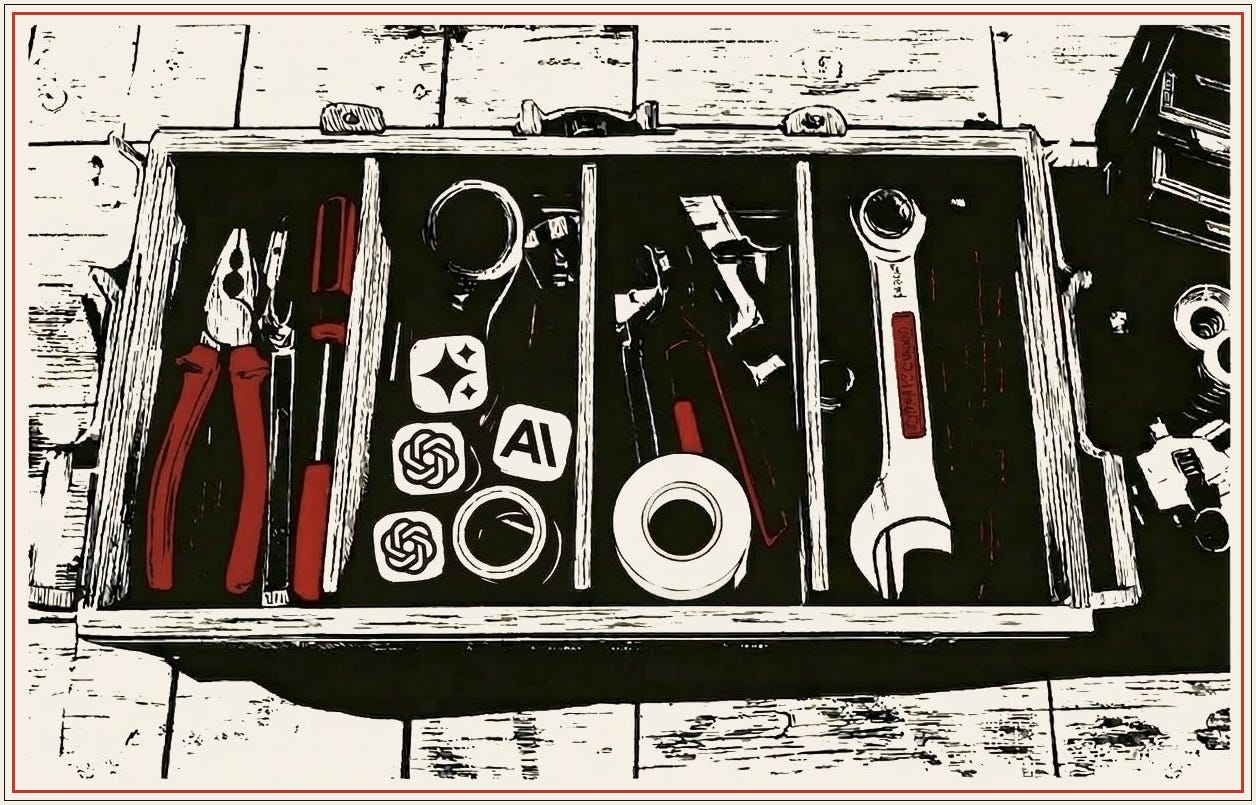

The sheer friction of the term “AI skills” is enough to prevent most people from trying. (If the term is a psy-op to keep most people out of the productivity gains, it’s working.) A reframing is in order. Here’s what actually works better (I shared this advice in “The Problem of the 99%: Why Almost No One Uses AI Well,” and I follow it religiously): take the most tedious, repetitive, soul-draining task in your current job and try to get an AI tool to do it. That is your goal.

If you do it, you will have greater AI skills than 99% of people. You’ll probably fail the first few times. That’s ok. You’ll learn more from those failures about what AI can and can’t do than from any course or tutorial. And you’ll end up with both a genuine productivity gain and a genuine understanding of where the limits are.

Don’t learn AI skills; solve one annoying problem with this new tool and see what happens.

III. Separate your finances and career from your identity

This one hits home for me because, as a writer, I’m blessed to make a living from the thing I love doing most: my identity provides for me. That is also a curse: if I lose my income, I might lose my identity, and vice versa.

Well, not really. Even if they are intermixed, they’re separate. The financial question is purely practical: if your income changed dramatically in the next eighteen months, do you have runway? Do you have savings, do you have flexibility, do you have skills that transfer to adjacent work? How much time can you afford to not work while you prepare to pivot your career? That’s stressful to think about, but it’s a solvable problem with concrete steps.

The identity question is harder (for me at least, but I think for many people as well): if your job disappeared tomorrow, would you know who you are without it? A lot of people have fused their sense of self with their professional role so thoroughly that the prospect of losing the job feels like the prospect of losing their lives. If you let these two problems stay entangled, you get this overwhelming blob of anxiety where you can’t work on either one because they feel like the same impossible thing. Breaking problems down is 101 engineering advice, but it’s also 101 life advice.

(This is a lengthy way of saying: find a hobby and see if AI feels less threatening.)

Your bank account and your sense of self are different problems with different solutions.

IV. Pick one task you do regularly and keep it AI-free

This is the opposite of #2 and there’s a good reason for it: balance. Doing one thing fully with AI gives you a deep understanding. Doing one thing fully without AI gives you deep grounding. The paradox of AI is that you need to be more cyborg than ever and, at the same time, more human than ever.

Tell me if this sounds familiar: you’re about to write something (an email, a paragraph, a document, even a text message, hopefully not a love letter), and before you’ve started thinking about what to say, you’re already moving toward the chat window. You’re going to ask ChatGPT instead, just for a little inspiration. You end up copy-pasting. This vice is, like all vices, a short-term pleasure and a long-term risk. It’s not just me saying it; science does: “A Wharton Study on AI Warns of a Growing Problem: Cognitive Surrender.”

Your brain reaches for the easy route because it wants to save energy. You’re engaging in cognitive offloading (you outsource your work) or cognitive surrender (you don’t even check afterward). That Wharton study found that when participants had access to AI, they followed wrong answers 80% of the time, and their confidence went up anyway! They borrowed the machine’s confidence without checking its accuracy.

The friction of engaging the brain in tasks is what prevents it from rotting away. Choose one thing and do that thing by hand, without assistance from AI; no offloading, no surrendering, AI-free. Forever. Which task matters less than the commitment to keeping one domain where your mind does the full circuit unassisted. Just like you sometimes walk for the sake of walking despite it being slow, you should think for the sake of thinking, despite it being less productive.

You don’t stop walking because cars exist, so don’t stop thinking because AI exists.

V. It only matters what your employer believes about AI

Your opinion about AI is irrelevant.

That was the first sentence of a guide I wrote for job seekers: “The Job Market Doesn’t Care If You Don’t Believe in AI.” The basic idea is that the job market has already made its decision, and that decision is binding whether it’s correct or not. Your boss and the boss of your boss have thoroughly bought the AI story, even if you didn’t. You might be the smartest person around with your wariness, but it doesn’t matter if your CEO bought the story.

How many CEOs do you know who didn’t? Much of what appears on job postings as “AI skills” is familiarity dressed in intimidating jargon. “Exp with LLM-based workflows” means you’ve used ChatGPT for something repeatedly. “Prompt engineering” means you’ve learned to give clear instructions. These are names for practices that many people are already doing informally.

You can be correct that the hype has outrun reality, that most companies using AI report no measurable productivity gains, that the whole thing might be theater. You can be correct about it all and still be unemployable, because the labor market doesn’t run on truth but on supply and demand. Your fate depends on someone who’s already made up his mind.

The labor market doesn’t run on truth but on what employers believe is true; act accordingly.

VI. Always aim for competence, not mastery

This item completes the triad with #2 and #5. What “AI skills” means is competence. What your employer expects from you is competence. Competence ≠ mastery.

Plenty of white-collar workers have pressure coming from above to demonstrate that they can work with these tools. A lot of people are responding to that pressure by either resisting (“Why do I need to change how I do stuff?”), panicking (“I need to become an AI expert NOW!”) or faking it (“I’ve been using ChatGPT-5 or whatever for months”). These reactions miss something that only becomes apparent from the other side: the gap between zero and competence is much, much smaller than the gap between competence and mastery.

You don’t need to be an expert; virtually no one does. You need to be able to use the tools well enough for a job you already know how to do. You reach this stage once you know, in the context of your job, what AI is good at, where it falls apart, and when to trust your own judgment instead. Getting there takes weeks of regular use, not months or years of study. The people who will survive in the next few years will be the ones who got competent early and let AI become a normal part of how they work rather than a source of anxiety. (I wrote a guide for this if you’re a beginner: “Learn to Use AI Competently in 1 Day.”)

Even if you want to become a power user—someone who uses AI to its maximum value—it’d be a mistake to frame your goal as “being a power user.” You should let it happen organically, as a byproduct of your actual goal, which is either to work less for the same money or to make your job easier. Power users are rarely people who want to be labeled “power users,” just like great writers don’t pursue being labeled a “great writer.” Think about it this way: a power farmer does not obsess about crop yield, but instead simply realizes that you can attach a plowshare to an ox rather than wield a hoe.

Focus on competence rather than mastery.

VII. Learn the skill yourself first, then let AI enhance it

I wrote a short piece about “slop cannons” and “turbo brains” that went overlooked. The core insight is one that everyone intuitively knows but few apply: getting the most out of AI for a task requires the user to be, at the very least, familiar with the task. If I ask Midjourney to paint a surrealist portrait, I won’t be able to detect the mistakes that Van Gogh would catch with a glance. If I ask Codex to make a script to find commonalities among the best-performing articles of my archive, I won’t be able to catch the bugs.

This happens to me constantly when I read AI-generated prose. I am good at catching it because I write for a living. Directing the machine is easy; telling good from bad is not. That’s where taste and judgement come in. (This is why people prefer slop poetry and slop prose to good-quality human writing, by the way.) If you already know how to do the thing and then you add AI, you get faster and better (or perhaps you realize AI can’t improve your craft: good). Your brain enters turbo mode. If you skip learning the thing and go straight to AI, you produce a lot of stuff and can’t tell if any of it is good. You become a slop cannon.

Once you have mastered a skill, you may get lazy if you offload too much, but it’s possible to notice your growing laziness and go back. Do you think it’s a bad idea to let an experienced driver use a self-driving car? Of course not. You assume that if things go awry, he’ll take control. But allowing a kid to “drive” an FSD-on Tesla is obviously insane, even if he never touches the wheel. Same principle here: mental arithmetic before calculators, paper books before audiobooks. Hone your skills first (learn to be a steady-handed person), and only afterward let AI enhance your work.

AI makes skilled workers prolific; it makes unskilled workers pollutants.

VIII. Know when to stop

I covered this one in “The Most Important Skill in AI Right Now: How to Know When to Stop.” You need to know when the output is good enough, when to do it yourself, when AI is eating your brain, and when the marginal improvement isn’t worth the cognitive cost.

AI creates a harmful illusion: because it can always produce more, you feel like you should always produce more. If you can now do in four hours what used to take eight, that doesn’t mean you should work sixteen-hour days because you’re 2x as productive. It means you have four hours back. But most people don’t take the four hours back. And then they wonder why they’re exhausted despite technically “doing less.”

When your tools have no natural stopping point, you have to build the stopping point yourself. You need to learn how to embrace your new powers without losing your old ones. You need to learn how to do less with more rather than more with less. We’re wired to exploit windfalls, not to exercise restraint before infinity. We’ve been given the chance to be genuinely rich: to spend more time with our loved ones, to read more books, to take more walks, to exercise, to travel, and even to do nothing but embody a gratefulness for having been born at the right time.

The hardest skill in the AI era is knowing when to stop using AI once you find it useful.

Most of you will read this guide and go back to your lives, without anxiety but also without a mindset change. That’s fine. That’s a totally natural reaction. Some of you, however, will decide to redefine how you approach the coming years and the paradigm shift that AI embodies, on top of getting rid of the anxiety. To you I say: well done, you are already halfway through the transition. May the rest feel lighter.

Thank you.