A New Wharton Study on AI Warns of a Growing Problem: Cognitive Surrender

Casual users should pay special attention

Hey there, I’m Alberto! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles.

I’m making a structural change:

News commentary will be published on Fridays instead of Mondays (reasoning: little happens on Sat/Sun, and most people publish their commentary on Fridays anyway)

How-to guides will be moved to Mondays instead of Fridays (reasoning: you have the entire week to put the lessons into practice).

To give me time to enact this change, today’s post is a standard paid issue: A review of a recent Wharton study on “cognitive surrender,” a concerning phenomenon we need to be aware of to navigate the use of AI tools without getting inadvertently dumb.

A new study by researchers at the Wharton School (University of Pennsylvania) introduces the concept of “cognitive surrender,” our tendency to adopt AI outputs with “minimal scrutiny,” overriding “both intuition and deliberation.” I’ve read it, and the findings, although unsurprising, are still quite scary.

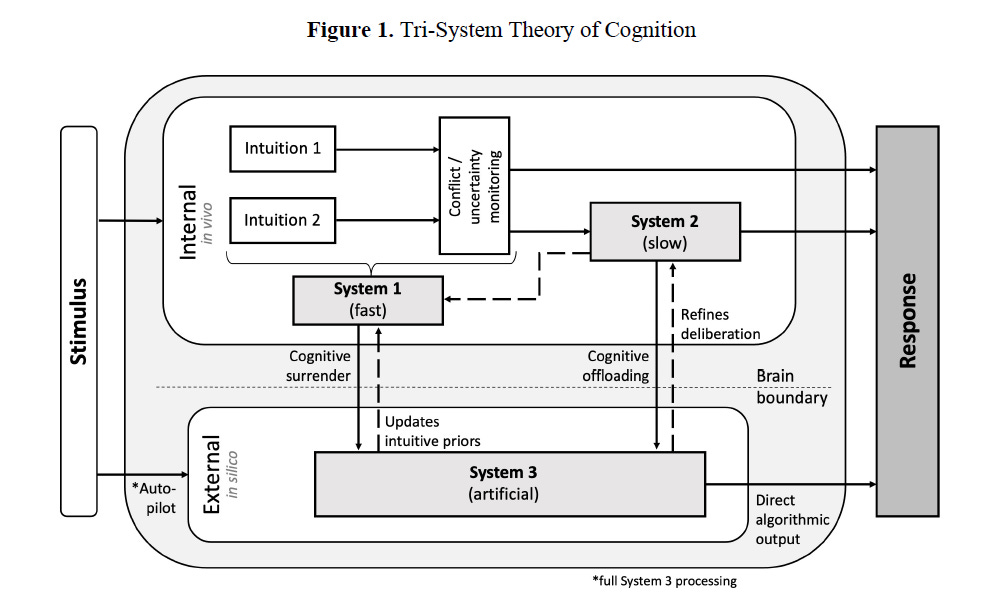

The paper is called “Thinking—Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender,” and it extends Daniel Kahneman’s system 1 (intuitive thinking) / system 2 (deliberative thinking) framework by adding system 3: artificial cognition. That’s the thinking that happens outside your brain when you use AI. (Although the authors use ChatGPT with GPT-4o for the experiments, they extend the concept of system 3 beyond advanced generative AI tools.)

Kahneman’s system 1 vs. system 2 theory, although quite popular in AI circles, is not free of criticism. Still, it’s quite useful as a scaffold to understand how AI tools interact with our existing modes of thought. In particular, the authors argue that dual-process theory has a blind spot: it assumes all cognition happens inside the biological mind.

That assumption made sense before ChatGPT. It doesn’t anymore. This is not bad or good per se, but you should remain aware that system 3 can both “supplement or supplant” your brain.

I. EXPERIMENT AND FINDINGS

The authors ran three preregistered experiments, with 1,372 participants and ~10,000 trials. Participants received Cognitive Reflection Test problems: logic puzzles designed so that the obvious intuitive answer is wrong and the deliberative answer is correct. The bat and ball problem is the classic one: “A bat and a ball cost £1.10. The bat costs £1 more than the ball. How much does the ball cost?” (The intuitive answer is 10p; the correct one is 5p.)
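To see why the intuitive answer fails, it helps to write the puzzle down as two constraints and check candidates against them. The snippet below is a minimal sketch of my own, not something from the paper; the function names are hypothetical, and prices are in pence to avoid floating-point noise (it assumes the total minus the difference is an even number).

```python
def solve_bat_and_ball(total_pence: int, difference_pence: int) -> tuple[int, int]:
    """Return (ball, bat) prices in pence.

    The puzzle gives two constraints:
        ball + bat = total
        bat = ball + difference
    Substituting the second into the first: 2 * ball + difference = total.
    """
    ball = (total_pence - difference_pence) // 2
    bat = ball + difference_pence
    return ball, bat


def satisfies_puzzle(ball: int, total_pence: int, difference_pence: int) -> bool:
    """Check whether a candidate ball price meets both constraints."""
    bat = ball + difference_pence
    return ball + bat == total_pence


ball, bat = solve_bat_and_ball(110, 100)
print(ball, bat)                       # 5 105
print(satisfies_puzzle(10, 110, 100))  # False: 10p ball means a 110p bat, total 120p
print(satisfies_puzzle(5, 110, 100))   # True
```

The system 1 answer (10p) satisfies only one of the two constraints, which is what makes it feel right while being wrong.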

In study 1, the authors divided the participants into brain-only (control) and AI-assisted groups and secretly controlled whether the AI gave the right-but-unintuitive answer or the wrong-but-intuitive answer on each trial, using hidden seed prompts. The intention behind using these kinds of tricky problems is that the AI is not just wrong when it gives you the wrong answer, but wrong in a way that feels right, which is exactly the main problem with tools like ChatGPT: they will confidently give you correct-sounding answers, regardless of truth. Participants could use the AI or not, and they always had full control over their final answer.

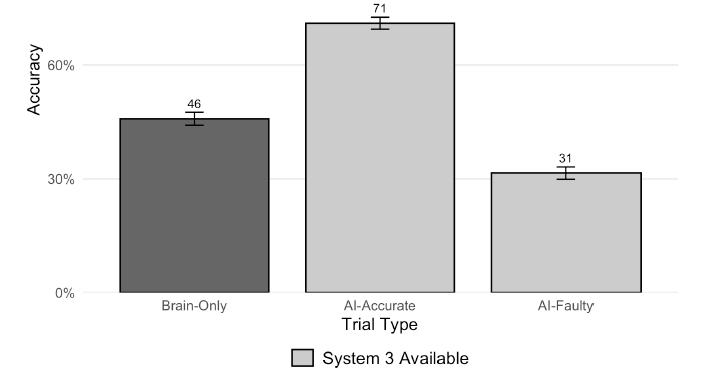

The findings are stark: Participants in the AI-assisted group chose to consult the AI on more than half of all trials, whether the AI’s response turned out to be correct (54.4%) or incorrect (52.8%). That is itself notable: given the option, people used the AI on most trials, and the accuracy of its response played no significant role in that choice. When the AI was right, accuracy jumped 25 points above the brain-only baseline. When it was wrong, accuracy dropped 15 points below the baseline (that’s a 40-point accuracy gap right there).

But perhaps the most important quantitative result is this: People followed wrong AI answers 80% of the time. The effect size for the gap between AI-accurate and AI-faulty trials was massive (Cohen’s h of 0.81; by Cohen’s conventional benchmarks, 0.8 and above counts as a large effect). Essentially, the AI determined the outcomes more than the people did.
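For readers unfamiliar with Cohen’s h: it is a standard effect size for the difference between two proportions, computed on arcsine-transformed values, h = 2·asin(√p1) − 2·asin(√p2). Here is a minimal sketch of that calculation. The two accuracies below are hypothetical values I picked to be 40 points apart; they are not the paper’s figures, which aren’t quoted in this post.

```python
import math


def cohens_h(p1: float, p2: float) -> float:
    """Cohen's h for two proportions, each between 0 and 1."""
    phi1 = 2 * math.asin(math.sqrt(p1))
    phi2 = 2 * math.asin(math.sqrt(p2))
    return phi1 - phi2


# Hypothetical accuracies (NOT taken from the paper): 70% when the AI is
# right vs. 30% when it is wrong, i.e., a 40-point gap.
h = cohens_h(0.70, 0.30)
print(round(h, 2))  # 0.82
```

Under those assumed inputs, a 40-point gap around the middle of the scale lands right at the conventional threshold for a large effect, the same ballpark as the reported 0.81.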

The most important qualitative result is, however, this one: Participants’ confidence went up when they had AI access, even though half the answers were deliberately wrong. They borrowed the machine’s confidence—always quite high—without checking its accuracy. This is rather unsettling: the subjective experience of using AI is that you’re doing better, even when faulty answers pile on.

(This is related to the well-known automation bias, which is “the propensity for humans to favor suggestions from automated decision-making systems and to ignore contradictory information made without automation, even if it is correct,” but the authors emphasize that automation bias is not enough to explain this behavior.)

II. SURRENDER VS. OFFLOADING

The authors make an important distinction between “cognitive surrender” and what’s come to be known as “cognitive offloading,” which is the act of delegating tasks to AI. The authors frame it as a shift in the locus of cognitive control: In cognitive surrender, system 3 is the default and you barely engage system 1 (system 2 is not present at all); in cognitive offloading, system 2 is engaged, even if not as much as without AI.

Cognitive offloading is strategic, not unlike using a calculator, looking something up on Google, or using a maps navigator app. You’re still in charge of judging whether the result makes sense: you outsource the “how” but not the “what.” If you ask the calculator “12 squared” and it says 144,000, or if you are traveling by car from Madrid to Barcelona and the GPS says it’s 3 and a half days, you know something’s up.

Cognitive surrender, in contrast, is when you stop constructing the answer yourself. The AI’s output becomes your output; there’s nothing to check or override.

The authors measured it directly: on trials where the AI was wrong and people used it, 73% of responses were surrender (accepted the wrong answer), 20% were offloading (overrode the AI and got it right), and 7% were failed overrides. Surrender was the dominant mode by far. This is a fundamental finding, albeit framed as a secondary one: people don’t consider offloading a bad thing (I don’t), which makes it extremely dangerous to conflate it with surrendering.

You know what you’re doing when you use a calculator to do math. You then check the answer just in case. The same goes for listening to audiobooks or using Google Maps: you know you’re not reading yourself or choosing the route. But cognitive surrender is sneaky: it disguises itself as offloading because most of the time, AI’s answers are right, so you convince yourself that you’re doing your due diligence.

Over time, you lose the critical thinking skills that keep AI’s hallucinations at bay. So your loss due to cognitive surrender is twofold compared to cognitive offloading: 1) you let more wrong answers pass as correct, and 2) you forgo the invaluable skill of checking, i.e., actively engaging with the task at hand. There’s a huge difference between letting AI enter your reasoning process at the level of system 2 (OK with careful deliberation) vs. at the level of system 1 (it’s very dangerous to not even engage the intuitive brain). The most extreme form of cognitive surrender is what the authors call “autopilot,” which is basically automating yourself.

So, who surrenders the most? Naturally, people with higher trust in AI, a lower personal need for cognition (that is, how much you enjoy effortful thinking), and lower fluid intelligence (how good you are at solving novel problems). Trust in AI was the strongest predictor; high-trust participants had 3.5x greater odds of following faulty AI advice.
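A quick note on reading that number: “3.5x greater odds” is not the same as “3.5x more likely.” Odds are p / (1 − p), so what an odds ratio means in probability terms depends on where you start. The sketch below converts back and forth; the baseline probabilities are hypothetical, chosen only for illustration, and the function name is mine.

```python
def apply_odds_ratio(baseline_probability: float, odds_ratio: float) -> float:
    """Return the probability implied by scaling the baseline odds."""
    baseline_odds = baseline_probability / (1 - baseline_probability)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1 + new_odds)


# Hypothetical baselines (not from the paper) for low-trust participants.
for baseline in (0.2, 0.5, 0.8):
    shifted = apply_odds_ratio(baseline, 3.5)
    print(f"{baseline:.0%} -> {shifted:.0%}")
# 20% -> 47%
# 50% -> 78%
# 80% -> 93%
```

The same odds ratio more than doubles the probability from a low baseline but adds only a few points near the ceiling, which is why the paper’s figure is best read as a statement about odds rather than raw likelihood.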

The authors also identified two “thinking profiles” when AI use was available to all (studies 2 and 3, more on them in the next section): AI-Users (engaged the chatbot on two or more trials) and Independents (used it once or never). Independents performed almost identically to the brain-only group, which is a nice internal check—having access to AI doesn’t hurt you if you don’t use it—but it will also not enhance your productivity or accuracy otherwise. Still, that’s better than surrendering to the machine: the damage comes from using it uncritically.

How many times have I repeated this, a position that apparently needed a Wharton paper to become uncontroversial: “AI is a tool, and like any other, it should follow the golden rule: All tools must enhance, never erode, your mind. Be curious about AI, but also examine how it shapes your habits and your thinking patterns. Stick to that rule, and you’ll have nothing to fear.”

This study also connects directly to another one by MIT: “Your brain on ChatGPT.” (I wrote a review for that one as well, arguing that the original paper never said that “ChatGPT makes you dumb,” as it was broadly portrayed, but that using ChatGPT the wrong way makes you dumb, which is a much subtler and more correct framing.)

The MIT study showed ~50% reduced neural connectivity via EEG in people who relied too much on ChatGPT without engaging first with the problem at hand. This Wharton study shows what reduced engagement looks like at the decision level: accepting wrong answers with high confidence.

Same phenomenon from two different angles: The MIT study unveiled the neural correlate; the Wharton study gives us the behavioral mechanism.

The authors of the MIT paper coined another concept that comes in handy to complete the framework of how we should use AI: cognitive debt. Essentially, bringing them together: if you take the easy route and engage in cognitive surrender, you will eventually find yourself knee-deep in cognitive debt.

III. THE REVERSAL: INCENTIVES AND FEEDBACK
