How the AI Industry Runs on Its Own Money

It will end really well or really badly

Hey, Alberto here! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles.

I. BUILDING CASTLES IN THE AIR

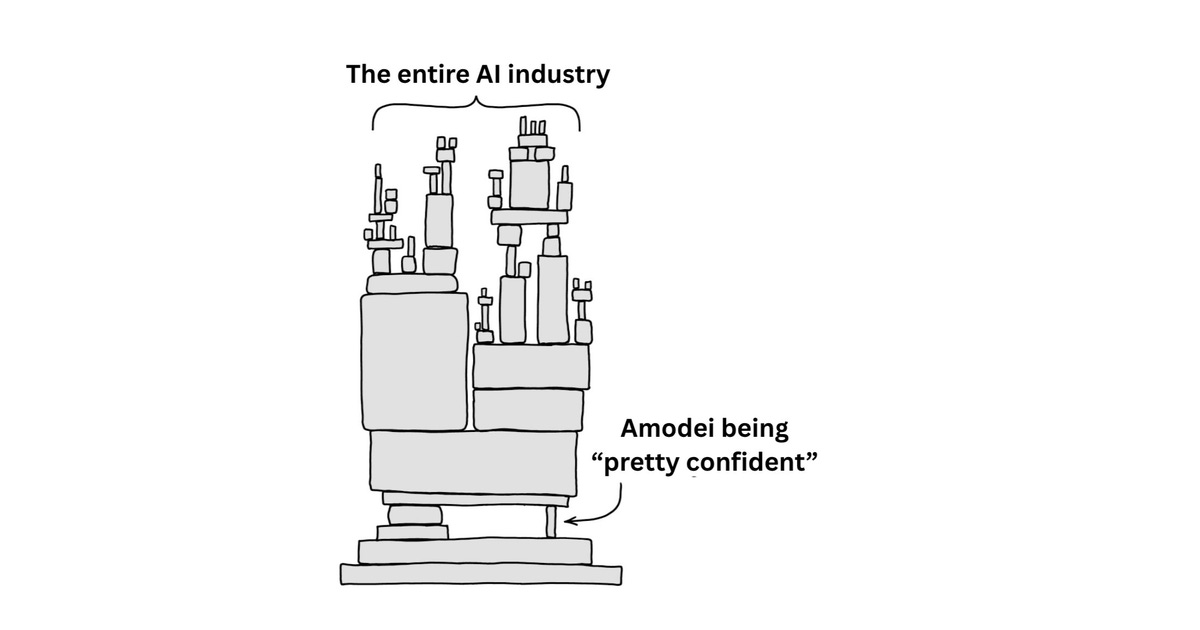

Half of the revenue backlog reported by four of the largest companies on earth comes from two startups fully dependent on the funding and patronage of those four companies. The circularity of the AI industry strikes again.

On Tuesday, The Information reported that Anthropic has committed to spending $200 billion on Google Cloud over five years. Google’s stock climbed ~2% in after-hours trading. Alphabet is, at the time of writing, the most valuable company on earth. At the same time, it is investing $40 billion in Anthropic, $30 billion of which is compute credits.

So, Google is giving Anthropic cloud credits. Anthropic commits to spending them, plus a lot of additional money, on Google’s cloud. Google books that as revenue backlog. Google stock goes up. And shareholders are comfortable with Alphabet’s $190 billion capex for 2026, dedicated to running Anthropic’s models.

Two weeks before the Google announcement, Amazon announced it’s investing up to $33 billion in Anthropic, and Anthropic committed $100 billion to Amazon Web Services. In November, Anthropic committed $30 billion to Microsoft Azure. Microsoft and Nvidia responded by investing up to $15 billion in Anthropic. In total, Anthropic has committed $330 billion in cloud spending to three providers. Those same providers, together with Nvidia, have collectively committed up to $88 billion in investments to Anthropic.

The company’s run-rate revenue recently crossed $30 billion (it is close to $40 billion), an incredible increase from the $9 billion it had at the end of 2025. Yet despite the surge, its total commitments exceed a decade of the company’s current run-rate revenue.1
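For readers who want to check the arithmetic, here is a minimal sketch that adds up the figures quoted above. All amounts are in billions of dollars and come straight from the text; the variable names are mine, and the "up to" investment figures are treated as if fully drawn, which is an assumption.

```python
# All figures in billions of USD, as quoted in the article.
cloud_commitments = {
    "Google Cloud": 200,
    "Amazon Web Services": 100,
    "Microsoft Azure": 30,
}

# "Up to" amounts are treated as fully invested (an assumption).
investments_in_anthropic = {
    "Google": 40,
    "Amazon": 33,
    "Microsoft and Nvidia": 15,
}

total_committed = sum(cloud_commitments.values())
total_invested = sum(investments_in_anthropic.values())


def years_of_run_rate(commitments: float, run_rate: float) -> float:
    """How many years of revenue, at a flat run-rate, the commitments represent."""
    return commitments / run_rate


years_at_30 = years_of_run_rate(total_committed, 30)  # the "$30 billion" figure
years_at_40 = years_of_run_rate(total_committed, 40)  # the "close to $40 billion" figure

print(f"Committed cloud spend: ${total_committed}B")      # $330B
print(f"Investments received:  ${total_invested}B")       # $88B
print(f"Years of run-rate at $30B: {years_at_30:.1f}")    # 11.0
print(f"Years of run-rate at $40B: {years_at_40:.2f}")    # 8.25
```

The "decade" comparison holds at the $30 billion run-rate; the result is sensitive to which run-rate figure you plug in, and a flat run-rate is itself a simplification.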

Now let’s do OpenAI’s spending commitments. Microsoft Azure: $250 billion through 2032. Oracle: $300 billion over five years. Amazon: $138 billion. OpenAI’s committed cloud spend exceeds $688 billion. Oracle’s cloud revenue backlog surged 438% year-over-year to $523 billion, largely driven by its relationship with OpenAI.

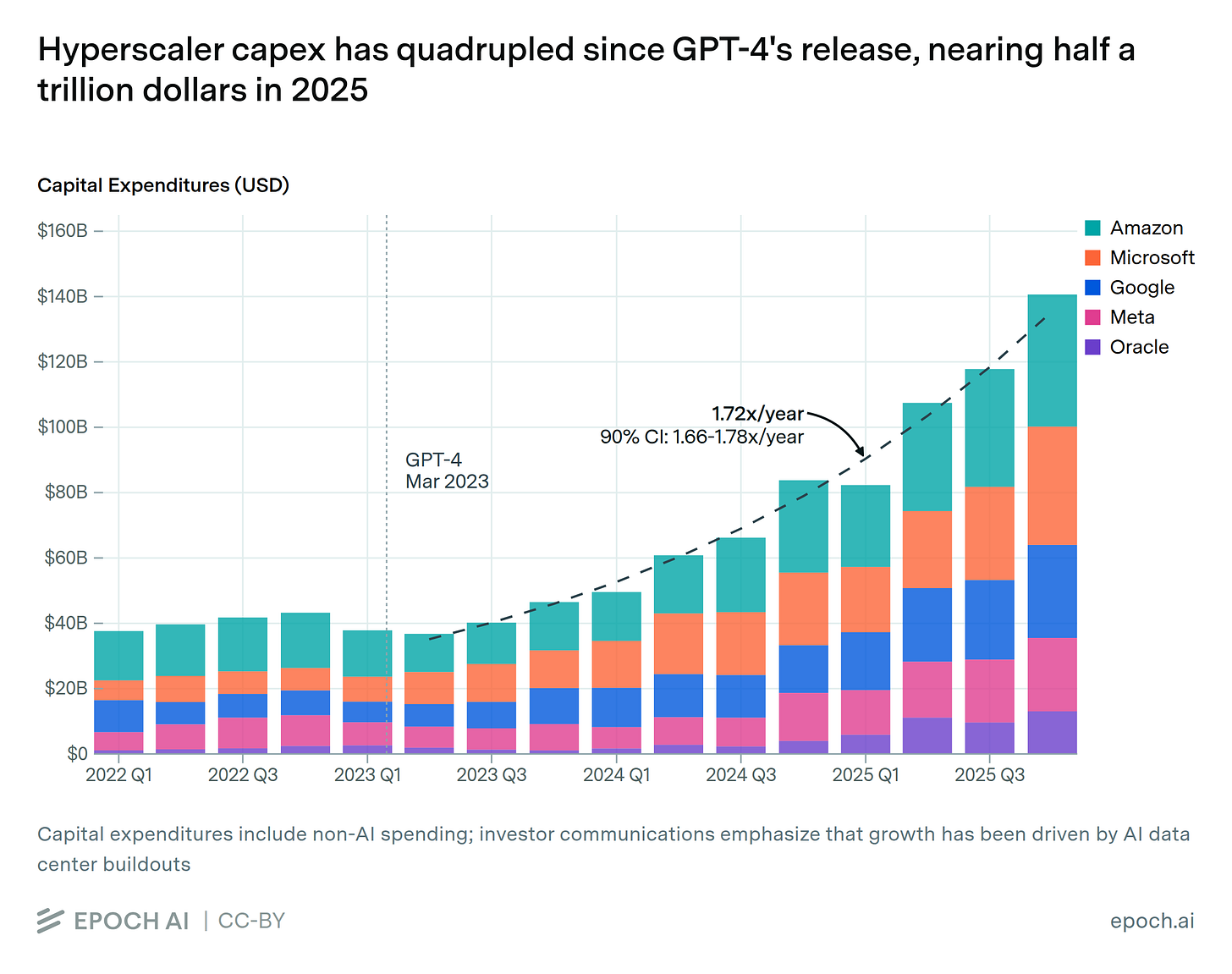

Across Microsoft, Oracle, Google, and Amazon, OpenAI and Anthropic together account for roughly half of more than $2 trillion in cloud backlog. The four hyperscalers plan to spend around $725 billion on AI infrastructure in 2026 alone, nearly twice as much as in 2025. A meaningful fraction of the demand justifying that expenditure traces back to companies whose funding traces back to the builders.
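The "roughly half" figure can be sanity-checked with the numbers already quoted in this piece. This is a rough sketch, not an audit: amounts are in billions of dollars, the variable names are mine, and I assume the backlog is exactly $2 trillion, which is the lower bound of "more than $2 trillion," so the share it prints is an upper bound.

```python
# All figures in billions of USD, as quoted in the article.
openai_commitments = {
    "Microsoft Azure": 250,
    "Oracle": 300,
    "Amazon": 138,
}
anthropic_commitments = {
    "Google Cloud": 200,
    "Amazon Web Services": 100,
    "Microsoft Azure": 30,
}

# Assumption: use $2 trillion, the lower bound of "more than $2 trillion".
total_backlog = 2000

openai_total = sum(openai_commitments.values())
anthropic_total = sum(anthropic_commitments.values())
combined = openai_total + anthropic_total

# Because the true backlog is larger than the assumed value, this is an upper bound.
share = combined / total_backlog

print(f"OpenAI commitments:    ${openai_total}B")     # $688B
print(f"Anthropic commitments: ${anthropic_total}B")  # $330B
print(f"Combined:              ${combined}B")         # $1018B
print(f"Share of backlog:      {share:.1%}")          # 50.9%
```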

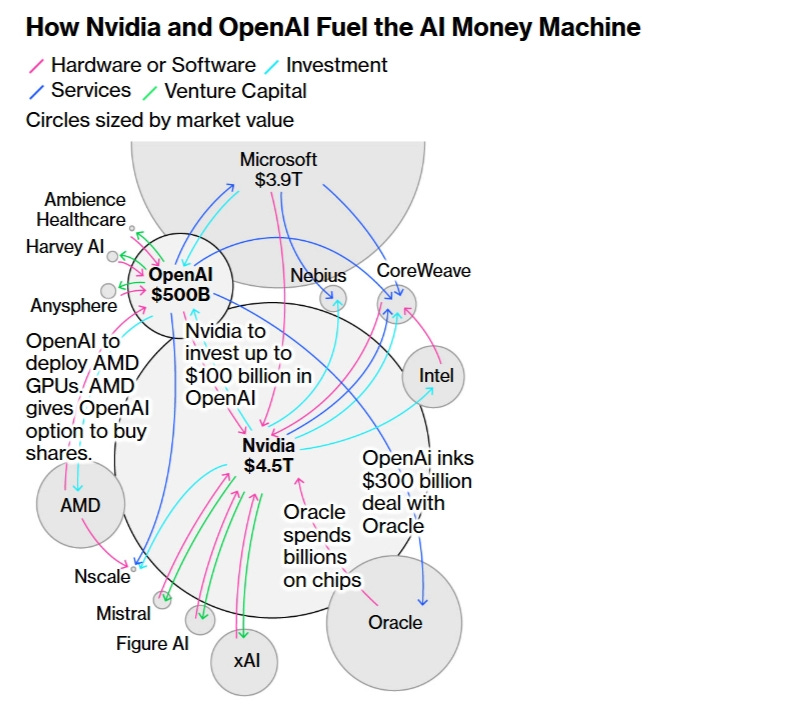

Bloomberg mapped this circular structure in October 2025 under the headline “OpenAI, Nvidia Fuel $1 Trillion AI Market With Web of Circular Deals.” Back then, Anthropic was significantly smaller in terms of investments, spending commitments, revenue, and user base, so it was excluded.2

At the time, Nvidia had invested $100 billion in OpenAI, OpenAI had committed to filling data centers with Nvidia chips, and Oracle was spending billions on those same chips to build the data centers OpenAI had contracted. Bloomberg’s assessment: “Never before has so much money been spent so rapidly on a technology that, for all its potential, remains largely unproven as an avenue for profit-making.” Morningstar analyst Brian Colello said: “If things go bad, circular relationships might be at play.”

The same story repeats over and over.

AI is, effectively, an industry built on unproven promises and circular deals.

But what’s so bad about that?

II. THEY’RE CONFIDENT IT WILL WORK OUT

At a summit in December, Anthropic CEO Dario Amodei offered a reasonable defense from inside the loop. “One player has capital and has an interest, because they’re selling the chips,” he said, “and the other player is pretty confident they’ll have the revenue at the right time, but they don’t have $50 billion at hand.” Amodei’s “pretty confident” is the one piece holding up the entire AI industry. Like so:

Is his confidence supported by the data?

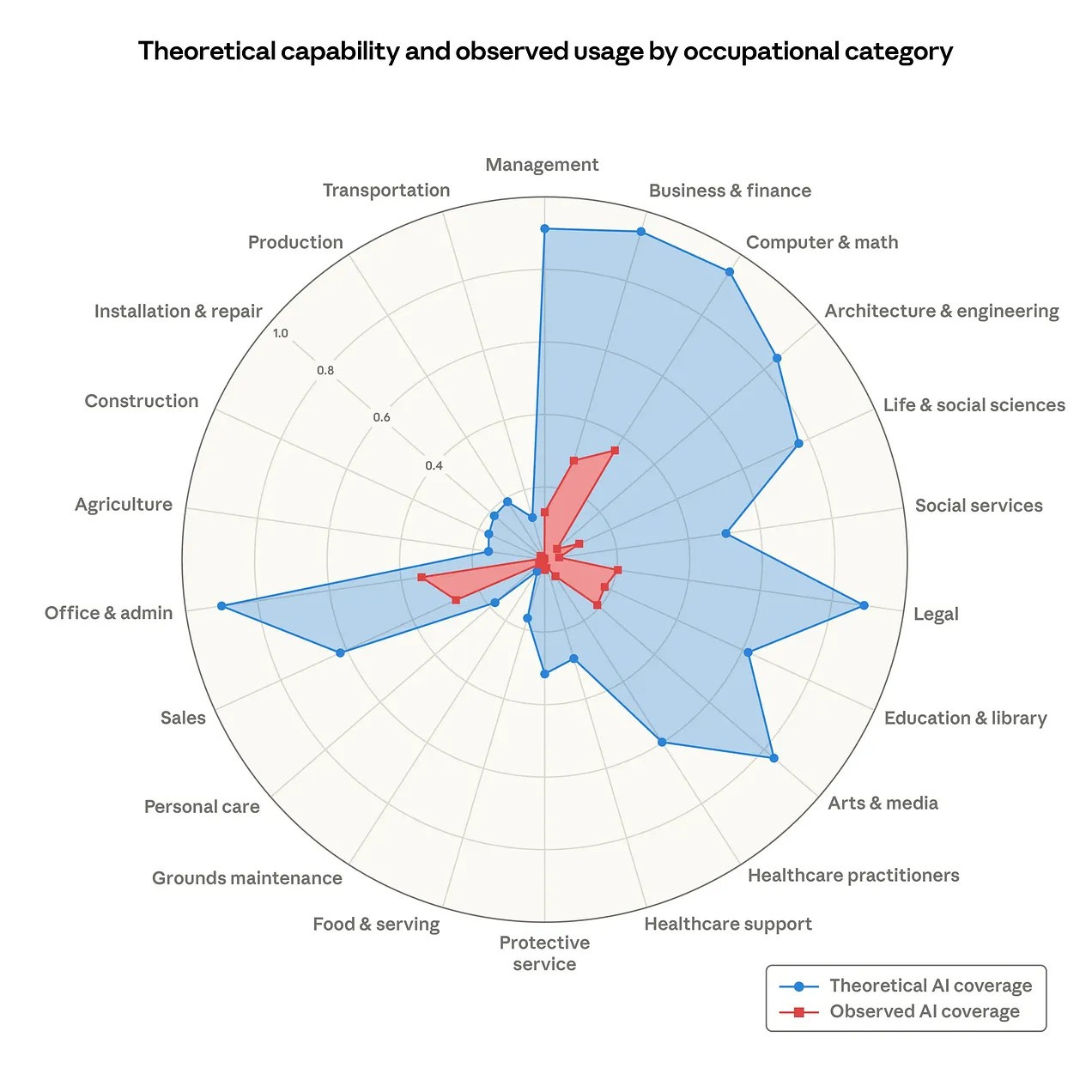

In March 2026, Anthropic published a report titled “Labor market impacts of AI: A new measure and early evidence.” They measured jobs’ “observed exposure” to AI. That is, how the theoretical capability of AI models compares to real-world usage data. Here’s what they found:

Blue is what AI could theoretically do. Red is what it is actually doing right now. With that in mind, this chart can be interpreted in two ways: one leads to the high confidence Amodei displays, and the other suggests these circular deals could be a serious mistake. You can take the color gap as evidence of how much room AI has to grow and diffuse throughout the economy (that’s Amodei’s interpretation and, I assume, that of the broader industry).

Or you can take the gap as evidence of the opposite: as a diagnosis of AI’s limitations in the real world, which laboratory tests do not reflect. That is, AI is the red because the blue is not real.

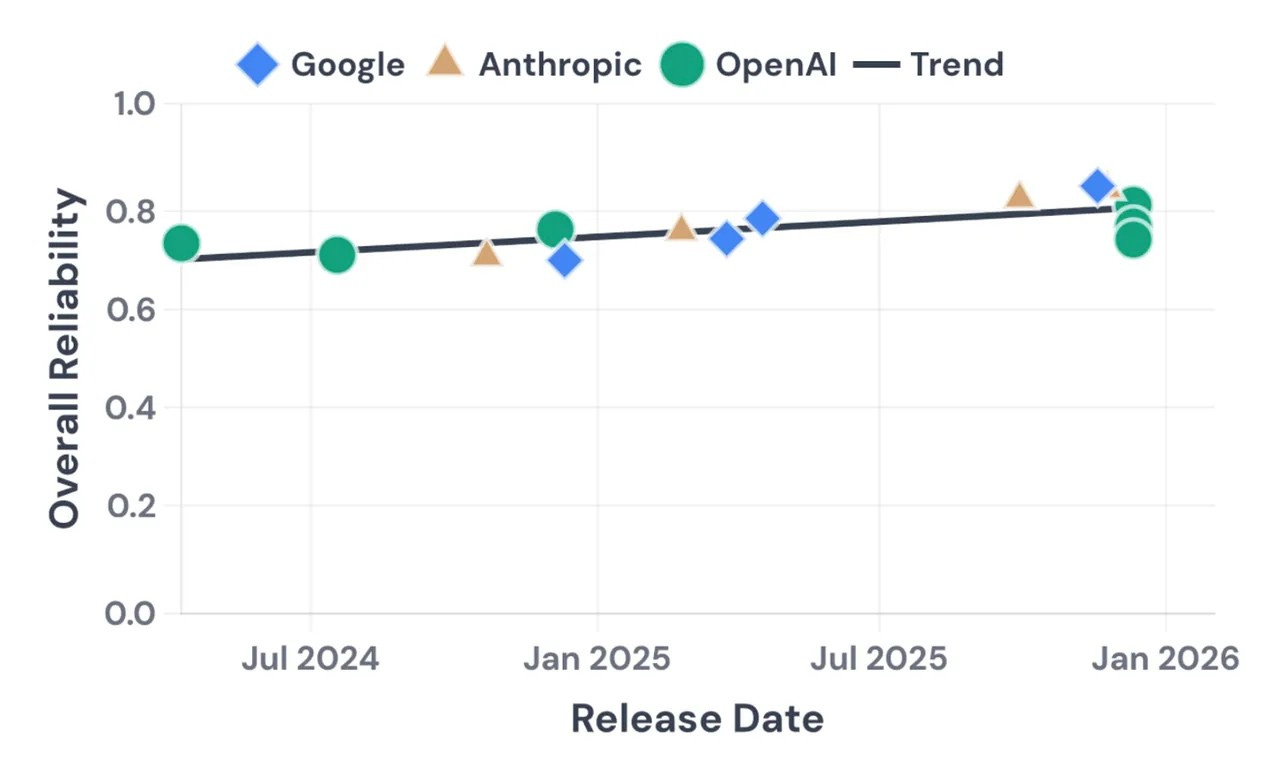

My reading falls somewhere between the two, and here’s what I’m afraid of: The AI industry is hand-waving away the many frictions that red would have to overcome to become blue, even in the best-case scenario. It could happen through deeper and better deployment, or it may not happen at all because AI models are not growing as fast in reliability as they are in capability.

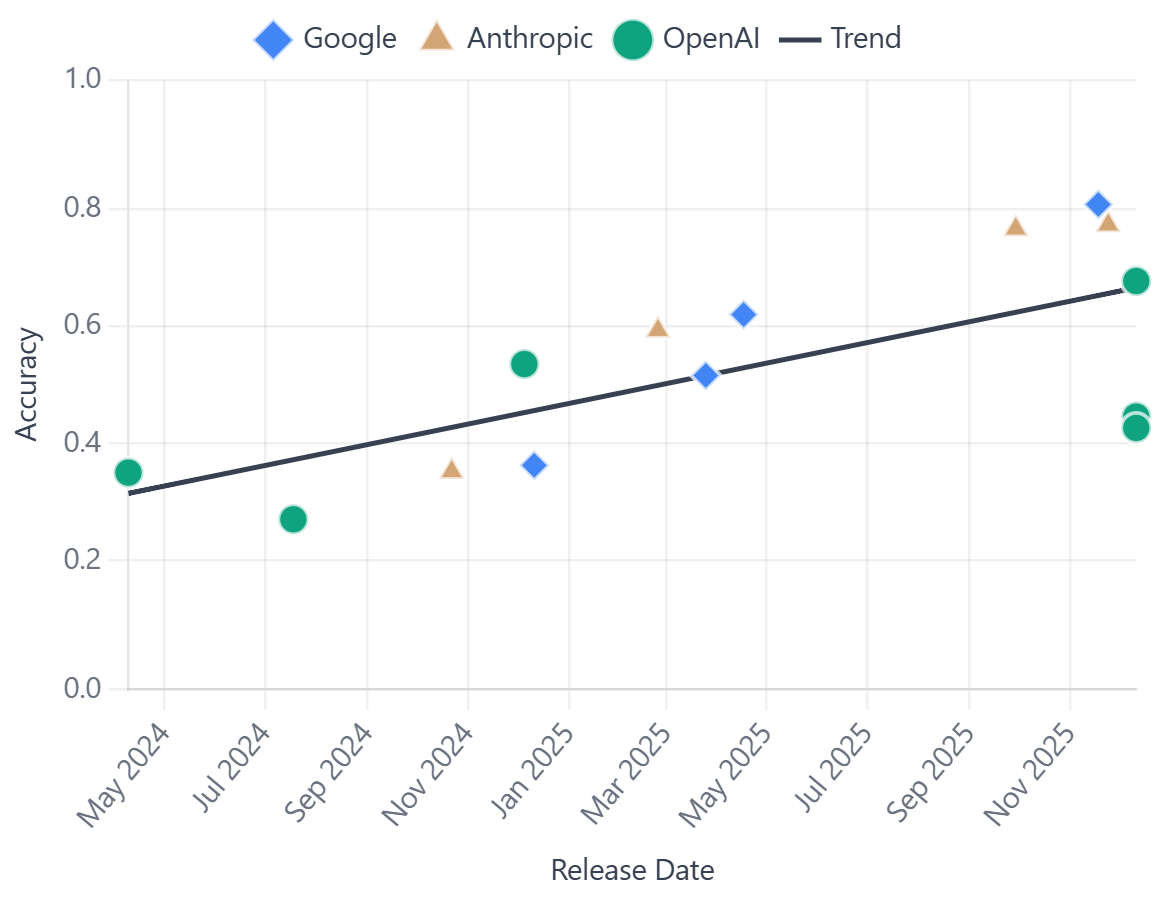

That’s exactly what a paper on AI agent reliability by Kapoor, Rabanser, and Narayanan, published in February, found (I covered it elsewhere). Here’s my summary: “Across 14 models from OpenAI, Google, and Anthropic (that is, frontier models), spanning 18 months of releases, they found that capability improved substantially while reliability improved only modestly, if at all.” Compare the slopes of the two trends below, first reliability and then accuracy.

Anthropic’s report is worryingly thin on explanations for the red-blue gap: “model limitations, . . . legal constraints, specific software requirements, human verification steps, or other hurdles.” As I wrote back then, they treat these as “temporary frictions,” merely speed bumps on the road to AI supremacy. But the evidence we have (including evidence I’ve been tracking, which I shared in a recent guide on this exact topic) suggests something worse than a diffusion curve: AI is a cliff. People are not using AI nearly as much as they should by now.

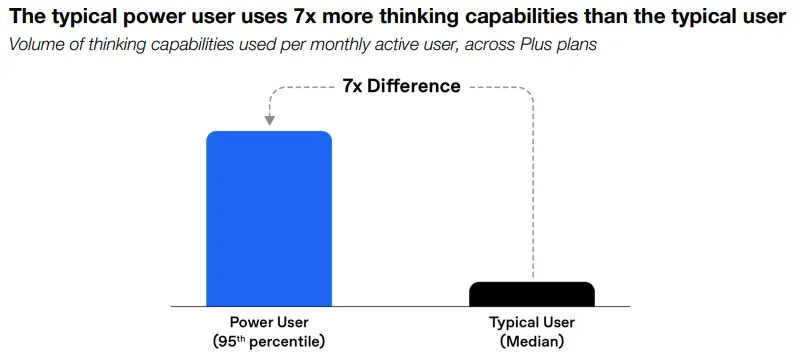

OpenAI published a report in January showing that a “power user” uses the “thinking capabilities” of AI models seven times as much as the median paying user. Meaning, people who already use ChatGPT, who already invest in it, are using it several times less than the users who are getting the most value out of AI. The median user knows how AI works; they use it all the time. They just… don’t find it useful or reliable enough to deploy it further.3

Current growth and revenue projections are unprecedentedly good for both Anthropic and OpenAI, but we also know two things. First, power users switch AI providers every couple of months (which means that, by using run-rate revenue, OpenAI and Anthropic are counting some users more than once). Second, AI revenue can rise or fall over time depending on whether clients and consumers manage to make a return on their investment.

So far, Anthropic and OpenAI are meeting revenue targets, but here’s the truth: A product that’s highly attractive and worth its price would have the same revenue profile as a product that’s highly attractive and unreliable at the same time. We just don’t know which one AI will be next year.

The circularity is, in other words, the financing mechanism for a technology too expensive for any single company to build alone and too unreliable to be applied to real-world tasks to the degree that its theoretical capabilities would suggest.

I don’t dispute the fact that the “circularity” is both legal and logical—and can be good if it works out!—in the sense Amodei claims. What I dispute is whether his confidence is justified.

III. WHAT HAPPENS NEXT

The AI industry needs one thing to survive: for the run-rate revenue it claims to turn into actual revenue. That will only happen if enterprise clients and individual consumers outside the loop find it worth paying for AI products at scale in the long run. That’s the only metric that can tell us something about the near-term future of the AI industry.

Turns out that, beyond Anthropic’s and OpenAI’s own usage measurements, beyond independent reliability benchmarks, beyond deals, projected revenues, spending commitments, and infrastructure capex, beyond all of that, the data from the real world tells two conflicting stories.
