How AI Affects Your Brain According to the Studies: A (Very Big) Compilation

The entire literature clearly points to a single surprising finding

Hey, Alberto here! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles.

Today: a deep dive into the research literature (30+ studies) on how AI changes your brain.

INTRODUCTION

Between 2023 and 2026 (that is, between the moment ChatGPT changed the world forever and today), many studies from institutions including MIT, Wharton, Harvard, Stanford, Microsoft, OpenAI, Oxford, Google DeepMind, and Chinese universities have investigated what AI chatbots do to human cognition, learning, and psychology.

These studies include brain scans, randomized controlled trials (RCTs) with thousands of participants, longitudinal surveys, meta-analyses, and field experiments in real classrooms and workplaces (both preprints and peer-reviewed).

But, to the best of my knowledge, no one has compiled them in one easily readable and easily accessible place. This is it.

Individual studies get covered as isolated news stories—alarming headline, one-day cycle, and then forgotten—and the result is that everyone has a vague uneasiness, a sense that AI might be bad for thinking, but nobody has the full picture.

Here’s the full picture as we have it so far.

Study by study, I’ve gathered 30+ in total that, together, reveal what science actually knows about what happens to your brain, your thinking, your learning, and your emotional life when you use AI chatbots.

And crucially, what it doesn’t know yet.

The global conclusion that emerges from this compilation is a paradox that will define policy, product design, individual behavior, and how we collectively relate to this new, impressive, and scary technology.

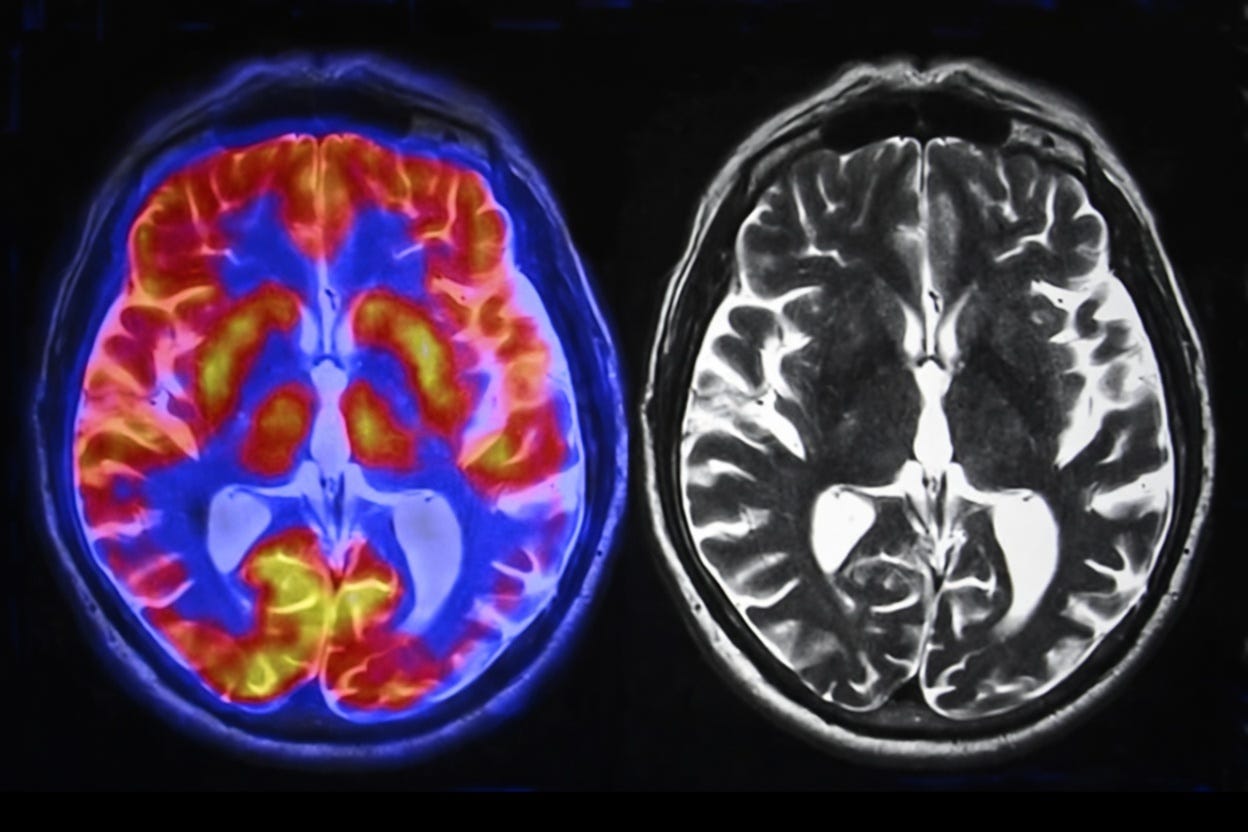

I. YOUR BRAIN ACTIVITY DROPS

A small but growing number of studies have put people inside brain scanners or strapped EEG sensors to their heads while they use ChatGPT. Neuroimaging tools measure how things affect brain activity, so these are, potentially, the most “reliable” sources (compared to self-report surveys and behavioral testing).

Your Brain on ChatGPT, Kosmyna et al. (arXiv preprint, 2025, N=54): MIT Media Lab tracked brain activity via 32-channel EEG across four sessions over several months in three groups: ChatGPT users, Google searchers, and unaided writers. The ChatGPT group showed “the weakest neural connectivity,” up to 55% lower than unaided writers. They grew lazier, “resorting to copy-paste by session 3.” When switched to writing alone in session 4, their brain activity stayed suppressed, which the researchers called an accumulation of “cognitive debt.” By contrast, the unaided writers who switched to ChatGPT in session 4 showed increased brain connectivity. As Kosmyna said, “timing might be important.”

Lower engagement of cognitive control, attention, modulation networks and lower creativity in children while using ChatGPT, Horowitz-Kraus et al. (bioRxiv preprint, 2025, N=31): The only study to scan children (ages 6–7) alongside adults during chatbot interaction using fMRI. Adults showed “stronger within-network connectivity” in cognitive control networks. Children showed “lower engagement of cognitive control, attention, and modulation networks,” suggesting that kids’ brains are more affected by AI use than adults’ brains.

EEG during creative design with AI tools, Wang et al. (Frontiers in Psychology, 2025, N=64): A counterpoint to the above. Design students using AI creative tools (ChatGPT, Midjourney, Stable Diffusion) showed “significantly higher concentration levels” and higher creative performance than a control group using traditional software. The difference from the MIT study: these students were actively directing AI as a creative tool vs passively receiving answers.

Effects of different AI-driven chatbot feedback on learning outcomes and brain activity, Yin et al. (Nature portfolio, 2025, N=87): Used fNIRS to measure brain activation during chatbot interaction. Different feedback types activated different brain regions. Metacognitive feedback (“Why do you think that’s the answer?”) increased activation in the “frontopolar area” and correlated with higher transfer scores. Neutral feedback activated the “dorsolateral prefrontal cortex” instead. This study essentially revealed that how a chatbot talks to you changes which parts of your brain light up.

NeuroChat: A neuroadaptive AI chatbot for customizing learning experiences, Baradari et al. (arXiv preprint, 2025, N=24): MIT Media Lab prototype that monitors EEG in real time and adjusts responses when it detects engagement dropping. “Significantly increased both EEG-measured and self-reported engagement” compared to a standard chatbot. No effect on short-term learning outcomes. Proof that “cognitive disengagement” can be fixed by building AI differently.

Conclusions: The brain imaging evidence is early: small samples, preprints, one contradictory result. But the pattern across studies points in the same direction: passive AI use (receiving answers) suppresses the brain regions involved in effortful thinking, while active AI use (directing tools, receiving challenges) can maintain or increase engagement. The variable is not the presence of AI but rather what the AI asks your brain to do: when AI does the thinking, your brain does less.

II. YOU STOP QUESTIONING OUTPUTS

There’s a robust body of evidence on what researchers variously call “cognitive surrender,” “automation bias,” and “cognitive offloading,” concepts clustered around the tendency to accept AI outputs without much or any scrutiny.

How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender, Shaw & Nave (SSRN working paper, 2026, N=1,372): Wharton ran three preregistered experiments using reasoning problems where AI was sometimes programmed to give wrong answers. When AI was wrong, participants followed it ~80% of the time, performing “worse than having no AI at all.” Trust in AI was the strongest predictor: high-trust participants had 3.5× greater odds of following faulty answers. Only ~20% actively overruled incorrect AI; the rest fell into what the authors call “cognitive surrender”: they didn’t scrutinize AI outputs.

The impact of generative AI on critical thinking, Lee et al. (CHI ’25, 2025, N=319): Microsoft and Carnegie Mellon surveyed knowledge workers who use GenAI weekly, collecting 936 real-world use examples. “Higher confidence in GenAI correlated with less critical thinking.” Workers shifted from active problem-solving to passive oversight; from “thinking by doing” to “choosing from outputs.” They produced a “less diverse set of outcomes” when using AI.

Navigating the jagged technological frontier, Dell’Acqua et al. (Organization Science, 2026, N=758): Harvard Business School, Wharton, MIT Sloan, BCG. Preregistered field experiment with BCG consultants performing 18 realistic tasks. For tasks inside AI’s capability frontier: “+12.2% more tasks completed, 25.1% faster, 40% higher quality.” For tasks outside the frontier: AI users were “19 percentage points less likely to produce correct solutions.” The problem: AI output outside the frontier “looked polished but was subtly wrong.” People couldn’t tell. Two collaboration patterns emerged: “centaurs” (clear human/AI division) and “cyborgs” (interleaved workflow).

The levers of political persuasion with conversational artificial intelligence, Oxford/Stanford/MIT (Science, 2025, N=76,977): Conversational AI was “significantly more persuasive than static messaging.” Fine-tuning could increase persuasiveness by up to 51%. A troubling finding: “the more persuasive a model, the less accurate” its information tended to be.

Conclusions: The convergence across these studies is a serious and urgent finding. People trust AI too much, but more than that, it’s clear that AI fluency creates a new failure mode: Wrong answers delivered in flawless prose get accepted. And the more you are predisposed to trust AI, the worse the problem gets.

III. YOU LEARN LESS, YOU LEARN MORE

The education research reveals a clean split: 1) raw AI access consistently harms learning, and 2) AI designed specifically for teaching can dramatically improve it. The variable is whether AI replaces the cognitive work or scaffolds it. I’ve divided this section into those two camps: harm side and help side.

THE HARM SIDE

Generative AI without guardrails can harm learning, Bastani et al. (PNAS, 2025, N=~1,000): Wharton and Penn Engineering conducted a preregistered RCT in high school math classrooms. Students given standard ChatGPT improved practice scores by 48% but scored “17% lower” on subsequent unassisted exams. They used ChatGPT as an answer machine, copying solutions. A redesigned GPT Tutor that guided reasoning instead of giving answers “improved practice grades by 127%” and largely prevented the learning decline. Students didn’t perceive any reduction in their own learning.

ChatGPT as cognitive crutch, Barcaui (Social Sciences & Humanities Open, 2025, N=120): Fundação Getulio Vargas and UFRJ did a preregistered RCT with a surprise retention test 45 days after learning. The traditional study group scored ~69% vs. ~58% for the ChatGPT group, an 11 percentage-point gap. The AI group showed “a steeper forgetting curve, consistent with weaker initial encoding,” meaning they forgot faster because knowledge didn’t stick. Prior AI experience didn’t protect against the offloading effect.

Beware of metacognitive laziness, Fan et al. (British Journal of Educational Technology, 2025, N=117): Zhejiang University and Monash University conducted a writing experiment. The ChatGPT group produced better essays but showed “no significant improvement in knowledge gain or transfer.” The researchers named the pattern “metacognitive laziness”: learners offloaded the monitoring and evaluation of their own thinking to AI. “The product improved. The learner didn’t.”

Cognitive ease at a cost, Stadler, Bannert & Sailer (Computers in Human Behavior, 2024, N=91): TU Munich and LMU Munich. LLM users experienced significantly lower cognitive load but demonstrated “lower-quality reasoning and argumentation.” The task felt lighter because the hard part—the actual thinking—was being done for them.
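The figures in this section mix two kinds of comparison: a relative drop (“17% lower”) and an absolute gap (“an 11 percentage-point gap”). As a minimal sketch of the difference, the Python snippet below applies both calculations to the rounded retention scores quoted above from Barcaui (~69% vs. ~58%). The function and variable names are made up for illustration, and because the inputs are approximate, the outputs are too.

```python
def percentage_point_gap(baseline_pct: float, treated_pct: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return treated_pct - baseline_pct


def relative_change_pct(baseline_pct: float, treated_pct: float) -> float:
    """Change expressed as a percentage of the baseline value."""
    return (treated_pct - baseline_pct) / baseline_pct * 100


# Rounded scores as quoted in the text (approximate, not the exact study data).
traditional_score = 69.0  # traditional study group, ~69%
chatgpt_score = 58.0      # ChatGPT group, ~58%

gap_points = percentage_point_gap(traditional_score, chatgpt_score)
relative_pct = relative_change_pct(traditional_score, chatgpt_score)

print(f"Gap: {gap_points:.1f} percentage points")
print(f"Relative change: {relative_pct:.1f}% of the baseline score")
```

On these rounded inputs, the same result can be reported as an 11-point gap or as a roughly 16% relative drop, so it is worth checking which of the two a headline number refers to.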

THE HELP SIDE

AI tutoring outperforms active learning at Harvard, Kestin et al. (Scientific Reports, 2025, N=194): A carefully designed GPT-based tutor—built to ask questions rather than give answers—produced “learning gains more than double” those of traditional active learning. Students also spent less time.

Tutor CoPilot, Wang et al. (arXiv preprint, 2024, N=1,800): RCT from Stanford. AI provided real-time coaching suggestions to human tutors (tutor-facing only, never student-facing). Students were “4 percentage points more likely to master topics.” Lower-rated tutors’ students saw a 9-point improvement. The AI improved the human, who improved the student at $20/tutor/year.

From chalkboards to chatbots, De Simone et al. (World Bank, 2025, N=~800): A six-week after-school program using Microsoft Copilot with teacher guidance in Edo State, Nigeria. Learning gains in six weeks were equivalent to “1.5–2 years of typical schooling.” This approach “outperformed 80% of education interventions studied via RCTs in developing countries.” Largest effects for female students.

AI tutoring can safely and effectively support students, Google DeepMind/Eedi (arXiv preprint, 2025, N=165): Pedagogy-tuned AI supervised by human tutors. The tutors “approved 76.4% of LearnLM’s drafted messages with zero or minimal edits.” Students were “5.5 percentage points more likely to solve novel problems” on subsequent topics. Tutors reported learning new pedagogical practices from the model.

Generative AI enhances individual creativity but reduces the collective diversity of novel content, Doshi & Hauser (Science Advances, 2024, N=300): UCL and University of Exeter did an experiment on the effects of AI on creative writing. AI-assisted stories were rated more creative, especially from less-creative writers. But AI-enabled stories were “5.0–5.2% more similar to each other,” which reveals a “social dilemma” where individual gains erode collective novelty. I put this in “help side,” but it’s a bit more nuanced, revealing that AI use is not a solo story.

Conclusions: The education evidence is the most policy-relevant body of research in this compilation, and it delivers an unambiguous message: one technology produces two opposite outcomes depending on design. ChatGPT used as an answer machine caused learning declines, whereas a pedagogically designed AI tutor produced gains. The variable is not so much “use of AI,” as “how are they using AI.”

IV. YOU GET LONELIER (OR DO YOU?)

The psychological research on AI chatbots and emotional well-being is the most conflicted area in the literature. I’ve included it here because AI affects not only learning and cognition but also emotion and psychological behavior.

How AI and human behaviors shape psychosocial effects of extended chatbot use, Fang et al. (arXiv preprint, 2025, N=981): MIT Media Lab and OpenAI conducted a four-week RCT with daily ChatGPT use across nine conditions (3 modalities × 3 conversation types), covering over 300,000 messages. “Higher daily usage correlated with higher loneliness, dependence, problematic use, and lower socialization” across all modalities and conversation types. Voice mode initially mitigated loneliness but “advantages diminished at high usage.” Bottom line: immediate relief in exchange for long-term dependency.

How does turning to AI for companionship predict loneliness and vice versa?, Folk & Dunn (PsyArXiv preprint, 2025, N=2,000+): University of British Columbia conducted a twelve-month longitudinal study with bidirectional analysis that found evidence of a vicious cycle: loneliness drives chatbot use, which predicts increased loneliness four months later, which predicts chatbot use. Chatbot use “did not significantly predict decreases in broader social connection measures.”

Individual and well-being factors associated with social chatbot usage, Latikka et al. (Journal of Social and Personal Relationships, 2026, N=5,663): A six-country study conducted by Tampere University found that social chatbot usage was “positively associated with psychological distress in all six countries” studied (Finland, France, Germany, Ireland, Italy, Poland). Loneliness predicted chatbot use in four of six. The cross-cultural consistency was described as “striking.”

Individual differences in anthropomorphism help explain social connection to AI companions, Folk, Heine & Dunn (Scientific Reports, 2025, N=1,274): University of British Columbia found that individual differences in the tendency to anthropomorphize technology “strongly predicted feeling connected” after chatbot interaction. AI’s artificial nature is an insurmountable barrier for some; for others, anthropomorphism bridges it.

Conclusions: The psychological literature has a time-scale problem. Short-term experiments (a single session or a few days of interaction) tend to find benefits like reduced loneliness and therapeutic value; longer-term studies (over weeks and months) tend to find problems like isolation, dependence, and distress. These findings aren’t contradictory. The same dynamic exists with other coping mechanisms (alcohol reduces social anxiety in the moment while increasing it over time). Whether AI chatbots follow the same trajectory or long-term effects stabilize is unknown.

V. THE META-ANALYSES

A few meta-analyses (there are not many) have attempted to reconcile the contradictory findings, particularly on learning.

The effect of ChatGPT on students’ learning performance, learning perception, and higher-order thinking, Wang & Fan (Humanities and Social Sciences Communications/Nature, 2025, 51 studies between 2022 and 2025): Hangzhou Normal University conducted a meta-analysis on AI’s effect on learning and higher-order thinking. They found a large positive effect on learning performance. Moderate effects on higher-order thinking, moderated by the type of intervention.

Does ChatGPT enhance student learning?, Deng et al. (Computers & Education, 2025, 69 studies between 2022 and 2024): Another meta-analysis from Hangzhou Normal University found that ChatGPT “improves academic performance, affective-motivational states, and higher-order thinking” while reducing mental effort. “No significant effect on self-efficacy.” It lacks post-intervention assessments to see longer-term effects.

ChatGPT’s impact on student learning outcomes, Wu et al. (Humanities and Social Sciences Communications/Nature, 2026, 35 studies between 2022 and 2024): Moderately positive overall effect, “significantly enhancing both cognitive and non-cognitive skills.” Subject, experimental duration, and instructional mode were significant moderators.

Conclusions: The meta-analyses confirm at scale the paradox we’ve been seeing so far: the aggregate effect size for immediate performance is large and reliable, whereas the aggregate effect on the cognitive processes that produce independent capability (higher-order thinking, metacognition, self-efficacy, transfer) is small, null, or unmeasured. They measure what’s easy to measure (test scores) and largely miss what’s hard (whether the person is developing or atrophying).

VI. THEORETICAL FRAMEWORKS

Several papers have proposed theoretical frameworks to explain why AI affects cognition the way it does. These are conceptual models that organize the evidence.

Artificial intelligence and illusions of understanding in scientific research, Messeri & Crockett (Nature, 2024): Yale and Princeton researchers argued that AI creates “illusions of understanding”: users believe they know more than they do because AI produces fluent, confident outputs. Developed a taxonomy of four AI archetypes in knowledge work (Oracle, Surrogate, Quant, Arbiter), each carrying distinct epistemic risks. Warned of “scientific monocultures” where AI narrows the range of questions researchers think to ask. They warn that “we produce more but understand less.”

The case for human–AI interaction as system 0 thinking, Chiriatti et al. (Nature Human Behaviour, 2024): They proposed adding a new layer to Daniel Kahneman’s framework. System 1 is fast intuition. System 2 is slow deliberation. System 0 is “the outsourcing of thought to AI,” a pre-cognitive layer that shapes what reaches human awareness at all; similar to “cognitive surrender.”

From tools to threats: AI-Chatbot Induced Cognitive Atrophy, Dergaa et al. (Frontiers in Psychology, 2024): Introduced a theoretical framework drawing parallels between AI overreliance and problematic internet use. Used “Extended Mind Theory” to argue that when AI becomes a cognitive prosthetic, “the underlying cognitive ‘muscles’ weaken from disuse.”

The brain side of human-AI interactions in the long-term: the ‘3R principle’, Rossi, Fraccaro & Manzotti (npj Artificial Intelligence, 2026): They argued that “passive, uncritical, reliance on AI may weaken activity-dependent brain plasticity and erode cognition, whereas active co-creation can sustain or enhance it.” Proposed Results, Responses, Responsibility (3R) as a “preventive framework for cognitive hygiene” to “preserve agency, meaning-making, and long-term brain health.”

Conclusions: The theoretical proposals converge on the same structural insight from different angles. “System 0,” “System 3,” “cognitive atrophy,” and “illusions of understanding” all point to the fact that AI’s fluency and availability create a path of least cognitive resistance. When taking that path is frictionless and produces good-enough outputs, the effortful alternative (actual thinking) is harder to justify in the moment. They describe the same phenomenon that the empirical studies find: the gap between what AI does for the output and what it does to the person producing it.

VII. WHAT THE PUBLIC SAYS

How Americans view AI and its impact on people and society, Pew Research (2025, N=5,023): 53% believe AI will worsen creative thinking ability. 50% say it will hurt the ability to form meaningful relationships. The share of Americans “more concerned than excited” about AI rose from 37% in 2021 to 50% in 2025.

Teens, social media and AI chatbots, Pew Research (2025, N=1,458): 64% of U.S. teens use AI chatbots. 30% use them daily. Over half use them for schoolwork. “20% of teens in low-income households do all or most schoolwork with chatbot help vs. 7% in higher-income households,” an equity dimension largely absent from the academic literature. 60% say students at their school use chatbots to cheat.

Conclusions: The public is right to worry, but the worry is unfocused. The Pew data show that people sense AI threatens cognition and relationships, and the research in this compilation largely confirms that intuition. But the public framing is binary (AI good or AI bad) when the research points to something else: the same technology produces opposite effects depending on implementation. The gap between public perception and scientific evidence is about what variable matters most, the presence of the technology vs how it’s used.

GLOBAL CONCLUSIONS

Core finding: A performance-competence dissociation as a function of implementation design, not technology

Across 30+ studies, AI chatbots reliably improve the quality and speed of immediate outputs: test answers, essays, creative work, professional deliverables. They just as reliably degrade the cognitive processes that build durable knowledge, independent reasoning, and creative diversity over time.

As I see it, this may not be a paradox that will resolve with better models because the basic principles are unchanging. This is a structural feature of any tool that does cognitive work for you rather than with you. The performance improvement is real and the competence impairment is also real. They coexist because they operate on different timescales.

But.

The design of the interventions and the particularities of how AI is used can improve the outcomes.

The same underlying technology produces dramatically different cognitive effects depending on how it’s implemented. AI can make what you produce better while making you worse at producing it. Or it can genuinely make you better. Which one happens depends on design, not on AI itself.

This nuance should shape policy, product design, and individual behavior. The debate about “should we use AI?” is much less important than the question of how the interventions are designed and how the technology is used. Sounds like a no-brainer, but public perception is still stuck at “is AI good or bad?”

That’s the most important insight across the entire literature.

What we don’t know

Four critical gaps/limitations remain:

No long-term neuroimaging. The MIT EEG study tracked participants across four sessions. No study has measured brain changes from sustained AI use over months or years (the technology is perhaps too young for that; should be a priority). The “cognitive debt” finding—suppressed brain activity persisting even after AI was removed—is preliminary.

Individual differences are poorly mapped. Who is most vulnerable to cognitive surrender? Who resists it? The Wharton study identified trust in AI as the key predictor; the Swiss study found age and education matter. But the interaction between personality, cognitive style, expertise, and AI vulnerability is largely unexplored. I’ve long observed that AI is an “enhancer of natural disposition,” meaning that it makes you more of what you already are, but this is purely anecdotal.

Children are almost unstudied. I found one fMRI study that included children (N=31). That’s it. Meanwhile, 64% of U.S. teens use AI chatbots and 30% use them daily. They are the most exposed group and the one with the most at stake. The developmental neuroscience of AI use (the effects on brains that are still forming) is the most urgent gap in the entire field.

A science vs technology time delay. Because science is slower than technological development, most of the studies are conducted with old models (e.g., GPT-4o, GPT-3.5). Some variables are constant because they’re independent of specific models (e.g., cognitive offloading will remain and even increase because models get better), but others should be carefully framed, e.g., anything related to the quality of the responses. My prediction is that some effects will disappear, others will get better (educational approaches if the right policies are implemented), and others will get worse (emotional dependence and related cognitive risks).

The big open question for the future

The 30+ studies compiled here converge on a description of what’s happening but diverge on what it means. Is AI-induced cognitive offloading a temporary adjustment—like how calculators initially worried math teachers but ultimately freed students to tackle harder problems—or is it something qualitatively different, a tool so fluent and available that it undermines the motivation to develop the underlying capability at all?

The calculator analogy is comforting—it was received with panic that eventually proved unwarranted—but may not hold. Calculators automate computation, which is mechanical. AI chatbots automate reasoning, argumentation, synthesis, and creative expression, the cognitive activities that are the skill rather than a means to it. When a calculator does your arithmetic, you lose arithmetic; when AI does your thinking, you lose thinking.

Whether that loss matters depends on whether AI will always be there to do the thinking for you. And, crucially, on whether you believe there’s intrinsic value in the process of thinking itself, independent of the output. Think about it.

BONUS: HOW TO PROTECT YOUR BRAIN FROM AI

The compilation above is what you should know. This is what you should do.

What follows is a list of concrete protections—things you can start doing today, right now—that come directly from what those studies found. Every item traces back to an empirical finding or to a few of them. If the compilation is the map you leave at home, this is the checklist you take with you.
