The Job Market Doesn’t Care If You Don't Believe in AI

Skeptics risk being unemployable and most don’t know it yet

Hey there, I’m Alberto! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday news commentary and Friday how-to guides. I publish occasional extra articles.

Your opinion about AI is irrelevant. The hiring manager’s opinion is the one that pays your rent, and I think it’s pretty clear what the average recruiter thinks when you look at job postings, full of AI-related requirements that didn’t exist 18-36 months ago: complex prompt techniques, multi-agent workflows, RAG pipelines, LLM integration, OpenClaw, MCP, experience with GPT-5.3-Codex or Claude Code 4.6 Opus, Google Antigravity, etc. These requirements were written by people who may not fully understand them (and couldn’t care less either way). It doesn’t matter.

This is not a piece about whether AI is good or bad. Whether it’s overhyped or underhyped (is that possible?). Whether the productivity gains are real or imaginary. None of that matters for what I’m about to tell you, because the job market has already made its decision, and that decision is binding whether it’s correct or not.

You’d do well to remember this fact: if your future livelihood does not depend solely on yourself, then your beliefs are not the only thing that defines your fate. Make sure you understand what the world wants from you and deliver, regardless of how you feel about it.

I. THE NUMBERS ON THE WALL

The number of workers in occupations where AI fluency is explicitly required has grown sevenfold in two years—from roughly 1 million in 2023 to around 7 million in 2025, according to McKinsey. Job postings requiring AI skills surged 73% from 2023 to 2024 and then another 109% from 2024 to 2025. In IT specifically, 78% of job postings now mention AI-related skills, according to a Cisco report. AI-related job postings grew 7.5% last year, per PwC, even as total job postings fell 11.3%.

The overall job market is contracting while the AI-skilled slice of it is expanding. If you’re inside that slice, the odds are tilting in your favor. If you’re outside it… Well, you really should not be outside it.
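For readers who want to check the arithmetic, here is a minimal sketch of what those percentages imply when combined. The two helper functions are illustrative names, not anything from the cited reports, and the second calculation assumes the PwC figures for AI-related and total postings describe the same market and period.

```python
def compound_growth(*rates):
    """Combine successive growth rates (0.73 means +73%) into one cumulative rate."""
    total = 1.0
    for rate in rates:
        total *= 1.0 + rate
    return total - 1.0


def relative_share_change(segment_growth, market_growth):
    """Change in a segment's share of a market when both grow at different rates."""
    return (1.0 + segment_growth) / (1.0 + market_growth) - 1.0


# Postings requiring AI skills: +73% (2023 to 2024), then +109% (2024 to 2025).
cumulative = compound_growth(0.73, 1.09)

# AI-related postings grew 7.5% while total postings fell 11.3%.
# Assumption: both figures cover the same market and the same year.
share_shift = relative_share_change(0.075, -0.113)

print(f"Cumulative growth in AI-skill postings: {cumulative:.0%}")   # 262%
print(f"Growth in AI postings' share of the market: {share_shift:.0%}")  # 21%
```

Under those assumptions, two consecutive surges compound to roughly 3.6 times the 2023 level, and a modest 7.5% rise becomes a share gain of about a fifth once the shrinking denominator is taken into account.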

It’s not just tech roles.

Four of the top ten occupations now requiring AI expertise are non-technical: marketing managers, market research analysts, sales managers, and chemists (if this list sounds arbitrary, it’s because it is: every job that uses either a lot of data or a computer will eventually require AI skills). The skills employers are asking for in AI-exposed jobs are changing 66% faster than in other roles. The premium for having AI skills on your résumé (same job, same title, same seniority) is 56%, up from 25% last year.

If you happen to be employed by one such company rather than looking for a job, you might 1) not feel the urge to update, or 2) expect them to train you. Well, don’t: Forrester’s research found that only 16% of workers have high “AI readiness.” Companies aren’t investing in training. Only 23% of AI decision-makers say their organizations offered prompt engineering—perhaps the simplest among the required skills—last year.

A 56% wage premium for listing the right words on a résumé is absolutely crazy. I say “listing the right words” because let’s be honest here: you don’t need to like AI as a technology. Your enthusiasm may buy you some sympathy, but at the end of the day, what your employer wants from you is familiarity and proficiency.

II. THE SKEPTIC’S COUNTER

Now, I can already hear the objections, and some of them are perfectly reasonable: Job postings are aspirational documents, not accurate descriptions of what people do.

The HR department of your average company copy-pastes requirements from templates. Hiring managers add “AI skills” the way they once added “Office,” and then “Transferable Skills,” and then “Full Stack.” It’s a checkbox more than a hard filter. Plenty of people get hired without half the skills listed on the posting. We’ve all been asked for ten years of experience in a framework that’s existed for three (generative AI is, by the way, a good example of this: we are still so early).

There’s data to support the skepticism, too. A study published this month by the National Bureau of Economic Research surveyed 6,000 CEOs and executives. The results are rather surprising if we compare them with those in the previous section: two-thirds of their firms use AI (ok, cool), but the average usage amounts to 1.5 hours per week (hmm, not much), and there’s near-zero measurable impact on productivity or employment (wait, what?).

Apollo’s chief economist, Torsten Slok, put it memorably: “AI is everywhere except in the incoming macroeconomic data. You don’t see AI in the employment data, productivity data, or inflation data.” This might sound familiar. It’s exactly what economist and Nobel laureate Robert Solow observed about the computer, which he famously captured in this slogan: “You can see the computer age everywhere but in the productivity statistics.”

So maybe the job postings are posturing. Maybe the AI requirements are theater, a corporate performance of modernity that evaporates the moment someone genuinely competent, but without the presumed qualifications, walks in the door for an interview.

Maybe.

III. DOES IT EVEN MATTER?

Let me repeat that: does it even matter? Does it matter that the postings are mostly fake? Does it matter that AI is not providing productivity gains across the board? The answer is a resounding NO. Let me tell you why. Carve this in your forehead. You will want to remember this every single time you look in the mirror.

You know your boss. And perhaps the boss of your boss. You know that the higher you go in the org chart, the more likely it is that the people in those roles adore AI. Quite literally: I know managers and random CEOs who are more excited about AI than Sam Altman, which is a rather impressive bar to clear.

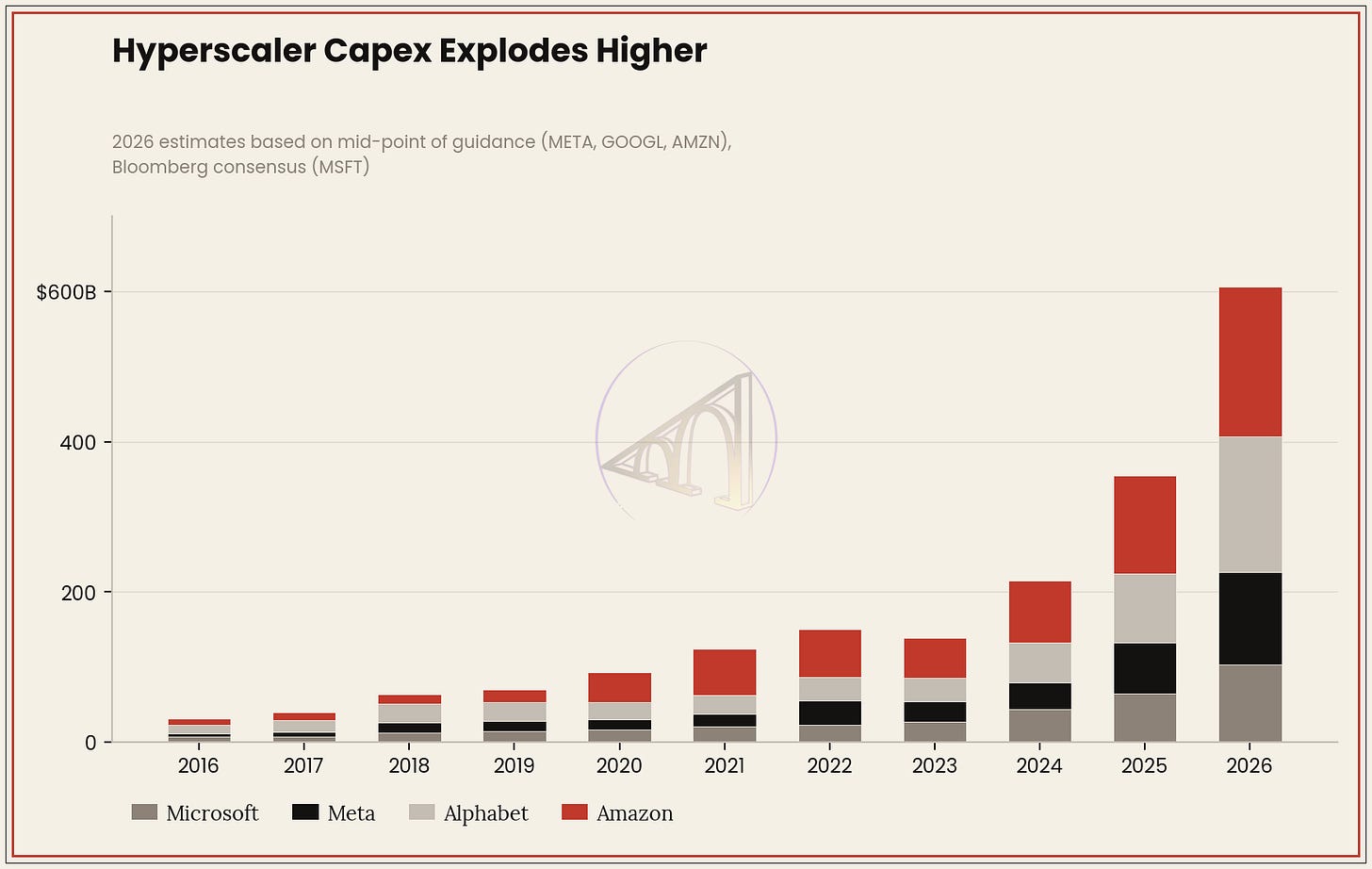

They’ve read the McKinsey, PwC, etc. reports and sat through the board presentations showing, with fancy charts, how AI will transform their industry. They have committed real budget: $650 billion in AI infrastructure spending this year across the big four tech firms alone (Google, Amazon, Microsoft, and Meta), so imagine how much they expect to sell to their clients, one of which might be your employer. The ripple effects are reaching every Fortune 500 company’s annual plan.

These people have thoroughly bought the story, even if you didn’t. You might be the smartest person around in not believing a word coming from AI leaders—I’m not judging—but it doesn’t matter if your CEO bought the story. And tell me: how many CEOs do you know who didn’t? Whether they’re eventually right—whether AI delivers the promised revolution one year from now or becomes a “normal technology” that takes a decade to diffuse throughout the economy or proves too faulty and unreliable to be deployed at scale—is secondary.

Because your fate depends on someone who’s already made up his mind.

This is the part that skeptics consistently miss. They argue about whether AI works (which is a worthwhile and important debate, insofar as it asks the right questions: where AI excels and to what degree). But what determines your professional future isn’t what works. It’s what the people with hiring and firing power believe works. And those people believe, and now they’re starting to make it clear in their job postings, that AI works.

A decade from now, having AI skills will be like having good “oral and written communication” skills or teamwork or whatever. You are either in or you are out, no nuance or second chances.

When 45% of hiring managers in tech say employees with outdated skills could be laid off, your personal assessment of the technology’s merits becomes an academic exercise: nice to reflect on and write about, but ultimately a vain attempt to secure your rent.

You might be right that AI coding tools make experienced developers 19% slower (a real finding from a rigorous randomized controlled trial by METR, which, as far as I can tell, has not been thoroughly debunked, even though the experimental design has some issues). You might be right that most companies using AI report no measurable productivity gains. (The reports on this are mixed. On the one hand, you have studies like the one from MIT that found 95% of AI pilots fail, but on the other hand, you have studies like the one from Wharton that reached the opposite conclusion: 75% of enterprises are already seeing positive ROI on generative AI.)

I’m emphasizing this and repeating the same ideas over and over because, although you may not realize it now, your job options might depend on it. I’m really sorry that this was imposed on you, and that you resist the official narrative with good reasons in hand. But you can be right about it all and still be unemployable, because the labor market doesn’t run on truth but on supply and demand.

All in all, the enthusiasts and the skeptics are heading toward very different futures, and not for the reasons either camp thinks.

The enthusiast who has spent the last two years tinkering with AI tools, learning prompt engineering, building workflows and pipelines, and experimenting with coding agents may or may not be more productive than the skeptic. The evidence is genuinely unclear and premature. But that person can walk into an interview and speak fluently about something the interviewer cares deeply about. AI enthusiasts fit the story the company is telling itself about its future.

The skeptic may be analytically right. They have (perhaps correctly) identified that AI’s impact on productivity is still unproven, that many “AI transformations” are corporate theater (adding a spark logo to your app is not enough), and that the hype cycle has outrun reality. But they cannot speak the language; they haven’t built the muscle memory or the nice-sounding portfolio.

When they sit across from a hiring manager who has staked their department’s budget on an AI strategy, they represent the past. Or worse: those preventing the company’s future.

Fifty-five percent of companies that replaced workers with AI now regret it. They’re quietly rehiring. I assume they won’t end up rehiring the same people at the same salaries, but rather people who can manage the AI that was supposed to replace the original workers. This is not the ideal modus operandi (better not to fire, out of FOMO, the people you actually need, right?), but it is, ultimately, the incarnation of that annoying maxim: AI won’t take your job, a person using AI will.

The job came back, yes, but not the job description.

IV. WHAT TO DO ABOUT THIS

Being right about the technology and being competitive in the labor market are two different games, and right now they’re moving in opposite directions. Here is what I’d tell anyone who has been sitting out the AI wave, whether out of skepticism, exhaustion, or principle:

The minimum viable investment is smaller than you think. You don’t need to become an AI engineer (I am far from one). You need to be able to describe, honestly and specifically, how you’ve used AI tools in your actual work. One concrete example of a problem you solved faster or better because you reached for an AI tool instead of doing it the old way (and, ideally, one concrete example of how AI failed to give you what you wanted faster or better). That’s the bar for most roles outside of dedicated AI positions. It’s achievable in a month without disrupting your routine.

The language matters more than the skill. Much of what appears on job postings as “AI skills” is really “familiarity with AI tools” dressed up in intimidating jargon. “Experience with LLM-based workflows” means you’ve used ChatGPT for something. “Prompt engineering” means you’ve learned to give clear instructions. “AI-augmented decision-making” means you’ve used AI to analyze data before making a recommendation. These are names for practices that many people are already doing informally (including skeptics!). Don’t let semantics stop you when you only need practice.

The skeptic’s advantage is real, but hidden. The person who understands AI’s limitations (the hallucinations, the performance-without-competence, the plausible-but-wrong outputs, the unearned confidence, etc.), or who, at the very least, is on the lookout for them, is, in a way, more valuable than the uncritical enthusiast. Companies are learning this the hard way. The “almost right but not quite” problem is the number-one frustration for developers (and for writers, I can attest). The best people can use AI tools while maintaining rigorous judgment about their output.

The window is still open, but it’s narrowing. The 56% wage premium for AI skills that I mentioned earlier tells you something about the supply-demand equilibrium right now: there aren’t enough people who can credibly claim AI competence to fill the roles that are asking for it. That premium will compress as more people skill up. The advantage of moving now is that the bar is still relatively low and the reward is disproportionately high.

V. CLOSING THOUGHTS

The deepest irony of the current moment is this: AI may or may not transform the economy. The jury is legitimately out. But it has already transformed the labor market through expectation. Companies haven’t replaced many workers with AI. But they have started replacing their mental model of what a desirable worker looks like, and that new model includes AI fluency as a default assumption.

This is not the first time a technology has reshaped hiring before it reshaped work. Companies asked for “computer skills” long before most jobs required them. They asked for “social media experience” long before most marketing was done on social media. Hiring is both a bet and a forecast: companies bet that you will embody the ideal worker of their forecasted future, and right now that future calls for only one thing: AI.

You can fight the narrative, or you can adapt to it. But you cannot ignore it, because the narrative is writing the job postings, the job postings are filtering the applicants, and the applicants who pass the filter are the ones who get the paychecks, which pay your rent and your food and—not to rub salt in the wound—your AI subscriptions.

By the time the productivity data settles the argument about whether AI was worth the hype, the people who waited for the answer will have spent years on the wrong side of history.

The 56% wage premium makes sense but masks a bigger gap. Most "AI skills" on job postings mean "can write prompts." The real premium - the one that'll widen - is for people who build with AI daily.

I've been running an autonomous agent for months. Not a side experiment - actual infrastructure that manages tasks, deploys code, works night shifts. The distance between "I use ChatGPT" and "I built a system that fixes itself at 3 AM" is enormous.

Documented what that learning curve actually looks like here: https://thoughts.jock.pl/p/wiz-1-5-ai-agent-dashboard-native-app-2026

The market will figure out this distinction. Question is how fast job descriptions catch up.

If the hype is real, then believers will also be unemployable on marginally longer timescales.